TL;DR

AI coding models went from autocomplete novelty to default IDE companion in under three years. By April 2026 Claude Opus 4.7 leads SWE-bench Verified at 87.6%, 84% of developers now use or plan to use AI coding tools (Stack Overflow 2025, up from 76% in 2024), 80% of new developers reach for GitHub Copilot in their first week (Octoverse 2025), and Datadog reports 69% of companies are now running three or more models in production. This page collects 50+ verified statistics on AI coding models for 2026 - every number sourced from primary research, vendor State-of reports, official model cards, or analyst firms.

- Frontier models now cluster on SWE-bench Verified: Claude Opus 4.7 leads at 87.6% (Anthropic, April 2026), followed by Gemini 3.1 Pro at 80.6% and OpenAI's reasoning models in the high 70s to low 80s.

- 84% of developers used or planned to use AI coding tools in 2025, up from 76% in 2024 (Stack Overflow 2025), and 89% save at least 1 hour per week with AI (JetBrains 2025). 69% of companies run 3+ models in production (Datadog 2026).

- MIT measured a 26% productivity gain across 4,867 engineers - but Veracode reports 45% of AI code still fails OWASP Top 10 security tests.

- The AI code tools market goes from ~$5B in 2023 to $26B+ by 2030 (Grand View Research, Mordor Intelligence consensus).

I run a multi-LLM pipeline for a living. At Preuve AI I route across Claude, Gemini, GPT, Grok and Exa to validate startup ideas with primary sources, and the state of the underlying coding models changes faster than any benchmark can keep up with. New model cards compress the leaderboard in weeks. Vendor pricing shifts quarterly. Production teams that picked one LLM 18 months ago now route across three.

I built this roundup as the cleanest snapshot I could assemble of where the AI coding market actually stands in April 2026, sourced from the primary research and official model cards I cite myself when I talk to journalists about this space. The same sourcing discipline runs through our 1,000-startup analysis and how AI actually validates ideas. Every stat below lists a named source and a year. No listicle blogs. No AI-generated stat dumps. No "experts predict." If I could not trace a number to a real research source, I dropped it.

The five numbers I would lead with

If a reporter cold-called me tomorrow asking for one paragraph on AI coding in 2026, I would give them these five facts and nothing else. They frame demand, capability, productivity, security and money in a single read.

First, demand. Stack Overflow's 2025 Developer Survey put adoption at 84% of developers using or planning to use AI coding tools, up from 76% the prior year and 70% in 2023, and 80% of professional developers now have AI somewhere in their workflow. Second, capability. The frontier-model gap has compressed on SWE-bench Verified - Claude Opus 4.7 at 87.6% (Anthropic, April 2026), Gemini 3.1 Pro at 80.6% (Google DeepMind), and OpenAI's reasoning models clustering in the high 70s to low 80s, all per the official model cards published in 2026. The race is no longer "who is best" but "who is best at this task at this price."

Third, productivity. The largest controlled study to date (MIT Economics, SSRN working paper 4945566, n=4,867 engineers across Microsoft, Accenture and a Fortune 100 firm) measured a 26.08% increase in completed tasks per developer. McKinsey's "Economic Potential of Generative AI" estimate brackets that figure at a 20-45% impact on software engineering productivity. Fourth, security. Veracode's GenAI Code Security Report keeps finding that 45% of AI-generated code fails OWASP Top 10 tests, and the pass rate has not moved in three years of model upgrades. Fifth, money. Three independent analyst firms (Grand View Research, Mordor Intelligence, SNS Insider) converge on a 25-27% CAGR through 2030, taking the market from roughly $5B in 2023 to $26B+ by 2030.

Those five lines are the spine of any 2026 explainer on AI coding tools. The rest of this page unpacks each one.

How big is the AI coding tools market in 2026?

I read every market forecast that crosses my desk with one question in mind: do the numbers actually agree? On AI code tools they do. Three independent analyst firms put the market between $24B and $37B by 2030-2032, and the implied CAGR sits in a tight 25-27% band.

Grand View Research anchors the most-cited forecast at $4.86B in 2023 climbing to $26.03B by 2030 (27.1% CAGR). Mordor Intelligence runs a parallel model and lands at $7.37B in 2025 rising to $23.97B by 2030 (26.6% CAGR). SNS Insider extends the horizon to 2032, projecting $37.34B at a 25.62% CAGR. The methodologies differ - some count strictly code-completion tools, others bundle full agentic dev platforms - but the trajectory is consistent enough that I treat the band, not any single number, as the real signal.

Zoom out and the picture gets bigger. McKinsey estimates broader generative AI software spending could reach $175B to $250B by 2027, with software engineering alone accounting for 20-45% of generative AI's total annual economic value. Enterprise generative AI spending was already $15B in 2023, roughly 2% of the global enterprise software market. The $26B coding-tool slice is the visible tip; the productivity gains downstream are what the analyst firms are actually pricing in.

The AI code tools market is forecast to roughly quintuple from $4.86B in 2023 to $26B+ by 2030, with three independent analyst firms converging on a 25-27% CAGR.

| Source | Base year | Base value | Forecast | CAGR |
|---|---|---|---|---|
| Grand View Research | 2023 | $4.86B | $26.03B by 2030 | 27.1% |
| Mordor Intelligence | 2025 | $7.37B | $23.97B by 2030 | 26.6% |
| SNS Insider | 2024 | $6.04B | $37.34B by 2032 | 25.62% |
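A quick way to sanity-check whether the three forecasts agree is to recompute each CAGR from its own endpoints. The sketch below assumes each firm compounds from the base year to the forecast year shown in the table; the firms may use slightly different windows, so treat the output as a cross-check and not as their method.

```python
def cagr(base_value: float, final_value: float, years: int) -> float:
    """Compound annual growth rate, returned as a percentage."""
    return ((final_value / base_value) ** (1 / years) - 1) * 100


# (source, base year, base value in $B, forecast year, forecast value in $B)
# Values are copied from the table above.
forecasts = [
    ("Grand View Research", 2023, 4.86, 2030, 26.03),
    ("Mordor Intelligence", 2025, 7.37, 2030, 23.97),
    ("SNS Insider", 2024, 6.04, 2032, 37.34),
]

for source, base_year, base, end_year, final in forecasts:
    years = end_year - base_year
    print(f"{source}: {cagr(base, final, years):.1f}% CAGR over {years} years")
```

Under that assumption, each recomputed rate lands within roughly 0.1 percentage points of the published figure, which is why I read the 25-27% band as internally consistent.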

From "tried it" to "use it daily"

The most under-reported finding of the last 24 months is the shape of the adoption curve. Stack Overflow's three consecutive Developer Surveys captured the inflection point cleanly: in 2023, 70% of developers said they used or planned to use AI tools and 44% currently used them. In 2024 those numbers jumped to 76% and 62%. By 2025, 84% report using or planning to use AI tools and 80% of professional developers have AI somewhere in their workflow, with 51% using it daily. JetBrains' parallel 2025 State of Developer Ecosystem (24,534 developers across 194 countries) lands at 85% regular AI-tool use, with 89% saving at least one hour per week and one in five saving a full eight-hour workday every week. I cannot remember a developer-tool category moving this fast since the migration from SVN to Git.

GitHub's own numbers fill in the enterprise picture. GitHub Copilot crossed 20 million all-time users in July 2025 with 4.7 million paid subscribers, up 75% year over year per Microsoft's Q2 FY2026 earnings disclosures. 90% of Fortune 100 companies have deployed it. The Octoverse 2025 report adds that 80% of new developers reach for GitHub Copilot in their first week, more than 1.1 million repositories now use LLM SDKs (up 178% year over year), and the Copilot coding agent authored over 1 million pull requests in the five months between May and September 2025 alone.

JetBrains breaks the picture down by tool, which is useful when a journalist asks "but which AI?". Their 2024 State of Developer Ecosystem found 69% of developers have tried ChatGPT for coding (49% use it regularly), 40% have tried GitHub Copilot (26% regular), and 80% of companies either explicitly allow third-party AI tools or have no formal restriction at all. The pluralism is the story: most developers are not picking one tool, they are stacking ChatGPT for ideation, Copilot for autocomplete in the IDE, and Claude or Gemini for longer agentic tasks.

84% of developers use or plan to use AI coding tools in 2025, up from 76% in 2024 and 70% in 2023 - the fastest tooling-adoption curve I have seen since the migration to Git.

The leaderboard compressed: Claude vs GPT vs Gemini vs Grok vs DeepSeek

Two years ago I would have told a journalist there was one obvious leader on coding tasks. In April 2026 that is no longer true. Three benchmarks - SWE-bench Verified for real GitHub issue resolution, Aider Polyglot for cross-language editing, and LiveCodeBench for competitive programming - now show the top frontier models clustered close together. Different benchmarks reward different shapes of intelligence, so a model can lead on one and trail on another. The implication for production teams is that picking the right model for each task beats picking the "best" model overall.

SWE-bench Verified is a benchmark of 500 human-validated real GitHub issues that tests whether a model can produce a patch which passes the project's own test suite. It is the benchmark labs cite most often when announcing a new flagship. The public leaderboard in April 2026 puts Claude Opus 4.7 at 87.6% (released April 16, 2026, per Anthropic) and Gemini 3.1 Pro at 80.6% (per the Google DeepMind model card). OpenAI's reasoning models cluster in the high 70s to low 80s in publicly reported figures. Sonnet-class models from Anthropic land within a few points of Opus at 60% of the price ($3/$15 versus $5/$25 per million tokens) - the most interesting price-performance crossover on the board. SWE-bench.com is the canonical leaderboard.

Aider Polyglot tells a different story. It runs 225 Exercism exercises across six languages and rewards models that can edit existing code without breaking it - a closer analog to actual day-job work than synthetic completion tests. The aider.chat leaderboard at the time of writing has OpenAI's o-series reasoning models at the top of the public scores, with the broader frontier (GPT-5, Claude Opus, Gemini Pro) clustering close behind. DeepSeek V3 is the strongest open-weights result on the board. LiveCodeBench, the third benchmark worth tracking, leans on competitive-programming tasks where pattern matching pays off; Gemini Pro and the latest DeepSeek reasoning model trade the top spot. The picture across all three boards is consistent: no single model leads everywhere, and the gap between proprietary and open-weights has narrowed faster than I expected this time last year.

The frontier-model gap on coding benchmarks has compressed - the question is no longer "which model is best" but "which model is best for this task at this price."

Coding-model pricing (per million tokens, April 2026)

| Model | Input | Output | Source |
|---|---|---|---|
| Claude Opus 4.7 | $5.00 | $25.00 | Anthropic Pricing, 2026 |
| Claude Sonnet 4.6 | $3.00 | $15.00 | Anthropic Pricing, 2026 |
| Gemini 3.1 Pro | $2.00 | $12.00 | Google AI Pricing, 2026 |
| GPT-5.5 | $5.00 | $30.00 | OpenAI Pricing, 2026 |
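Per-million-token prices are hard to compare by eye, so here is a minimal sketch that turns the table into a per-call cost. The workload (20,000 input tokens, 2,000 output tokens) is a hypothetical I picked for illustration, and the calculation ignores caching discounts, batch pricing and tokenizer differences between vendors.

```python
# Prices in USD per million tokens (input, output), copied from the table above.
PRICES = {
    "Claude Opus 4.7": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "GPT-5.5": (5.00, 30.00),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one call at list price."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical agent step: a large prompt in, a short patch out.
for model in PRICES:
    print(f"{model}: ${call_cost(model, 20_000, 2_000):.3f} per call")
```

For this input-heavy shape the input price dominates the bill; an output-heavy workload would shift the ranking toward whichever model has the cheapest output tokens.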

Does it actually make us more productive?

This is the only question I get asked at investor dinners, so I want to handle it carefully. The honest answer is yes, the productivity gains are real and they are converging on a 20-30% range, but the methodology of each study matters more than the headline number. Let me walk through the four studies I actually trust.

The largest is the MIT Economics paper (SSRN working paper 4945566 by Cui, Demirer, Jaffe, Musolff, Peng, Salz), a combined analysis across three field experiments and 4,867 developers at Microsoft, Accenture and an anonymous Fortune 100 firm. They measured a 26.08% increase in completed tasks (SE 10.3%) among developers using AI tools. This is the number I cite first because the sample size is hard to argue with and the experiments ran inside real workplaces, with access to the AI tool assigned at random instead of compared across self-selected teams.
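The standard error matters as much as the point estimate here. As a rough sketch, assuming the reported SE of 10.3 is in percentage points and a normal approximation is reasonable (both are my assumptions, not something the paper is quoted on in this article), the implied 95% interval looks like this:

```python
def normal_ci(estimate: float, std_error: float, z: float = 1.96) -> tuple[float, float]:
    """Two-sided confidence interval under a normal approximation."""
    margin = z * std_error
    return estimate - margin, estimate + margin


# 26.08% effect with SE 10.3, both read as percentage points (assumption).
low, high = normal_ci(26.08, 10.3)
print(f"95% CI: {low:.1f}% to {high:.1f}%")
```

On those assumptions the interval runs from about 6% to about 46%, which is why I quote 26% as the center of a wide band and not as a precise figure.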

The lab experiments tell a more dramatic but narrower story. The original GitHub research (Peng et al., 2022) showed 55% faster task completion in a controlled lab experiment - but the task was a single isolated function written from scratch, the kind of work AI tools are best at. The same study reported 78% completion rate vs 70% in the control. Google Research's 2024 randomized controlled trial of 96 Google engineers (arxiv 2410.12944, Paradis et al.) measured a more realistic 21% faster task completion, with the authors flagging a wide confidence interval. The takeaway: lab numbers run higher than field numbers, and "55% faster on a tight task" is not the same claim as "26% more output across a quarter."
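To see why "faster" and "more output" are different claims, it helps to convert one into the other. The sketch below assumes "X% faster" means the task took X% less time, and that a developer fills the saved time with more tasks of the same kind; real work rarely behaves that neatly, so read it as a unit conversion and not a forecast.

```python
def throughput_gain(time_reduction_pct: float) -> float:
    """Percent more tasks completed per unit time, given percent less time per task."""
    if not 0 <= time_reduction_pct < 100:
        raise ValueError("time reduction must be in [0, 100)")
    remaining_fraction = 1 - time_reduction_pct / 100
    return (1 / remaining_fraction - 1) * 100


for label, reduction in [("GitHub lab study", 55), ("Google RCT", 21)]:
    print(f"{label}: {reduction}% less time -> {throughput_gain(reduction):.1f}% more tasks")
```

Under those assumptions a 55% time saving is equivalent to roughly 122% more tasks per hour, while a 21% time saving works out to roughly 27% more, much closer to the field estimates.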

Where it gets interesting is the field follow-ups. McKinsey's "Economic Potential of Generative AI" lands at a 20-45% impact on software engineering productivity. UC San Diego IT Services ran an 8-week internal Copilot deployment and measured 48% less time on high-complexity tasks and 63% less on low-complexity ones, per the August 2024 blink.ucsd.edu writeup. The Zoominfo enterprise Copilot study (arxiv 2501.13282, 400+ developers) reported a 33% suggestion acceptance rate, 20% line acceptance rate, and 72% developer satisfaction. The GitHub Copilot code-quality follow-up by GitHub Research found 13.6% more lines of code produced per error - the only study I have seen that measured quality and quantity at the same time, and one of the few that pushes back gently on the "AI code is sloppier" narrative. Across radically different methodologies, the same 20-30% range keeps showing up.

Across the largest published studies, AI coding tools deliver a 20-30% range of measured productivity gain - with the biggest deltas on routine tasks and well-defined boilerplate, and the smallest on novel, ambiguous problem solving.

Why do most teams run multiple LLMs in production?

This is the trend most coverage misses, and it is the one I have the most direct visibility into. Production teams are not picking one model anymore, they are routing across two or three. Multi-LLM went from "interesting pattern" to "majority practice" in 18 months. At Preuve AI my validation pipeline routes between Claude, Gemini, GPT, Grok and Exa depending on the task: Claude for long structured analysis, GPT for fast deterministic JSON, Gemini for grounded factual claims, Grok for fresh real-time data, Exa for web search. The data below makes clear I am not the outlier.

Datadog's 2026 State of AI Engineering report is the cleanest snapshot of how production AI is actually built. 69% of companies now run three or more AI models in production, up from roughly 40% in 2024. OpenAI is used by 63% of companies, but 77% of those companies also use at least one other provider, primarily to avoid lock-in and to route the right task to the right model. LangChain's 2026 State of Agent Engineering report aligns with this conclusion: multi-model architectures have become the production norm. DeepSense's CTO Exclusive Survey points the same way in large enterprises, with 65% prioritizing multi-model agentic systems over single-model approaches.

The reason most teams give for multiplexing is cost, not capability. A heavy task on Claude Opus 4.7 runs you $5 input / $25 output per million tokens; the same task routed to Sonnet 4.6 runs $3 / $15 - a meaningful per-call delta when you are running thousands of parallel agent invocations. If a router can correctly identify which 20% of requests need Opus and send the rest to Sonnet, the blended price falls to $3.40 / $17 per million tokens, about 32% below an all-Opus bill. LangChain's report adds a second finding I expected but had not seen quantified: 57% of organizations are not fine-tuning at all, instead relying on base models with prompt engineering and retrieval-augmented generation. The takeaway for any 2026 piece on AI architecture is that the modern AI stack is plural by default and the sophistication is in the routing, not the model choice.
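The routing arithmetic is easy to reproduce. This is a minimal sketch, assuming every request uses the same number of tokens whichever model serves it and that the router itself costs nothing; `blended_price` and the share values are illustrative names and numbers of mine, not part of any vendor API.

```python
# (input, output) list prices in USD per million tokens, from the pricing table.
OPUS = (5.00, 25.00)
SONNET = (3.00, 15.00)


def blended_price(premium, cheap, premium_share):
    """Average (input, output) price when premium_share of traffic goes to the premium model."""
    return tuple(
        premium_share * p + (1 - premium_share) * c
        for p, c in zip(premium, cheap)
    )


for share in (1.0, 0.5, 0.2):
    blended_in, blended_out = blended_price(OPUS, SONNET, share)
    saving = (1 - blended_in / OPUS[0]) * 100
    print(f"{share:.0%} on Opus: ${blended_in:.2f} / ${blended_out:.2f} per M tokens, "
          f"{saving:.0f}% below all-Opus")
```

Because Sonnet is priced at the same 60% ratio on both input and output, the saving is the same on either side of the bill: 80% of traffic moved times a 40% discount gives 32%.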

77% of companies that use OpenAI in production also use at least one other model provider, primarily to avoid lock-in and to route the right task to the right model.

Enterprise budgets are loud and accelerating

The budget data is short but worth flagging. The Sōzō Pulse CTO Survey for 2024 found 63% of CTOs increased their generative AI budgets that year, and 24% doubled them or more. McKinsey reports enterprise generative AI spending reached $15B in 2023 (about 2% of the global enterprise software market) and projects the gen AI software category will hit $175B to $250B by 2027. Translate that to the coding-tool slice we covered earlier and you can see why analyst firms are confident on the 25-27% CAGR: the spending intent is already in the budgets. CFOs are not deciding whether to fund AI dev tools in 2026, they are deciding which line items to cut to expand them.

The uncomfortable side: security has not improved

Every honest piece on AI coding tools has to grapple with the security data, and the security data is uncomfortable. Veracode runs the largest standardized AI-code security test in the industry, and their 2025 GenAI Code Security Report found that 45% of AI-generated code fails OWASP Top 10 tests. Their Spring 2026 update added a finding that bothered me more than the headline number: the pass rate has stayed flat at roughly 55% across 2023, 2024 and 2025, even as the underlying models have made dramatic capability gains. Models got smarter at coding. They did not get smarter at writing secure code.

The breakdown by language is worth knowing if you are pitching a security angle. AI-generated Java code fails 72% of the time, Python 38%, JavaScript 43%, C# 45%. Cross-Site Scripting (CWE-80) prevention is the worst category in the report at 86% failure across AI-generated samples. The simplest read on these numbers is that AI tools learned to mimic code patterns from the open internet, and the open internet is full of insecure code. Without explicit security review or a hardened secure-coding fine-tune, models reproduce what they saw.

Developer sentiment is shifting in response. The 2025 Stack Overflow Developer Survey marked the first year-over-year decline: trust in AI tool accuracy fell from 40% in 2024 to 29% in 2025, while active distrust climbed from 31% to 46% (19.6% "highly distrust" plus 26.1% "somewhat distrust"). 66% of developers cite "AI solutions that are almost right but not quite" as their top frustration, and the same 66% say they spend more time fixing almost-right AI code than they would have spent writing it from scratch. Favorable sentiment toward AI tools dropped from 72% in 2023-2024 to 60% in 2025. Adoption is climbing while trust is falling - Stack Overflow's own framing for the 2025 release was "Developers remain willing but reluctant."

The newest threat is the one I find most interesting because it lands outside the model itself. Slopsquatting is a supply-chain attack where an adversary registers a package name that AI coding tools tend to hallucinate, then waits for a developer to copy-paste the AI suggestion into a `pip install` or `npm install`. Spracklen et al.'s research published at USENIX Security 2025, sampling 576,000 code outputs across 16 different LLMs, found that 19.7% of AI-suggested package names do not exist - roughly 1 in 5 dependency recommendations is hallucinated. They identified 205,474 unique hallucinated package names, with 43% repeating consistently across runs and 38% similar to real package names. It is not a hypothetical risk; researchers have already demonstrated viable attacks.
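One cheap guard is to check that an AI-suggested name exists on the registry before installing it. The sketch below queries PyPI's JSON endpoint (`https://pypi.org/pypi/<name>/json`, which returns a 404 for unknown projects); the function names and the injectable `opener` parameter are my own illustration. It only catches names nobody has registered yet, so it does not protect against a name an attacker has already claimed.

```python
import urllib.error
import urllib.request

PYPI_JSON_URL = "https://pypi.org/pypi/{name}/json"


def package_exists(name, opener=urllib.request.urlopen):
    """Return True if PyPI has a project with this name, False on a 404."""
    try:
        with opener(PYPI_JSON_URL.format(name=name), timeout=10) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise  # outages or rate limits must not be read as "package missing"


def unknown_packages(names, opener=urllib.request.urlopen):
    """Filter a list of suggested dependencies down to the ones PyPI does not know."""
    return [name for name in names if not package_exists(name, opener)]
```

A call such as `unknown_packages(["requests", "name-the-model-suggested"])` needs network access and returns the names worth a second look. A name that does exist still deserves a check of its age, maintainer and download history before it goes into a lockfile.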

Despite three years of model upgrades, the rate at which AI-generated code passes basic security tests has stayed flat at roughly 55% - meaning nearly half of AI-generated code samples still fail an OWASP Top 10 check.

How will AI coding adoption grow by 2028?

Forecasts are easy to dismiss, but two numbers from the most-cited analyst firms are now consistent enough that I treat them as a planning baseline. Gartner's April 2024 press release expects 75% of enterprise software engineers will use AI code assistants by 2028, up from less than 10% in early 2023 - more than a 7.5x increase in five years. The Stanford AI Index 2026 adds the constraint that will shape how fast that future arrives: security is now the #1 scaling barrier for agentic AI, cited by 62% of organizations as the gating issue. Combine that with the Veracode pass-rate data above and the picture is clear: the technology will get there before the safety story does, and that is the operational risk most enterprises are now pricing in.

How I would use these statistics

If I were writing about AI coding tools in 2026, the three numbers I would put in the lead are: 84% adoption (Stack Overflow 2025), Claude Opus 4.7 at 87.6% on SWE-bench Verified (Anthropic, April 2026), and the 26.08% MIT productivity finding (SSRN paper 4945566, n=4,867). Those three frame the demand, the capability and the impact in a single paragraph.

If you cite this page, the canonical attribution is "Preuve AI, AI Coding Models Statistics 2026". I update the SWE-bench section every time a frontier model ships a new card, so the page stays current. The 50+ stat list above and the source roundup below should give you the spine of any explainer, vendor comparison or trends piece. If you need a quote on multi-LLM stack design, hallucinated package risk, or how a solo founder ships AI-heavy product, email me at [email protected].

FAQ

Which AI coding model is the best in 2026?

On SWE-bench Verified the leading public scores in April 2026 put Claude Opus 4.7 at 87.6% (Anthropic, released April 16, 2026) and Gemini 3.1 Pro at 80.6% (Google DeepMind). OpenAI's reasoning models cluster in the high 70s to low 80s in publicly reported figures. Most production teams run two or three of these in parallel rather than picking a single winner.

What percentage of developers use AI coding tools?

84% of developers report using or planning to use AI coding tools (Stack Overflow 2025, up from 76% in 2024), 80% of professional developers have AI somewhere in their workflow, 51% use it daily, and JetBrains' 2025 survey puts regular AI-tool use at 85%. GitHub Copilot crossed 20 million all-time users by July 2025 with 4.7 million paid subscribers, up 75% year over year, and 90% of Fortune 100 companies have deployed it.

How fast is the AI coding tools market growing?

Grand View Research values the global AI code tools market at $4.86B in 2023, projected to reach $26.03B by 2030 at a 27.1% CAGR. Mordor Intelligence projects $7.37B in 2025 to $23.97B by 2030 at 26.6% CAGR. McKinsey estimates broader generative AI software spending could reach $175B-$250B by 2027.

Are AI coding tools actually making developers more productive?

MIT Economics measured a 26.08% increase in completed tasks across 4,867 developers at Microsoft, Accenture, and a Fortune 100 firm (SSRN paper 4945566). GitHub's original Peng et al. study reported 55% faster task completion in a controlled lab experiment. Google Research's 2024 randomized trial of 96 engineers measured 21% faster task completion. McKinsey estimates generative AI could lift software-engineering productivity by 20-45% of current annual spending on the function.

How secure is AI-generated code?

Veracode's 2025 GenAI Code Security Report found 45% of AI-generated code fails security tests against the OWASP Top 10. The pass rate has stayed flat at roughly 55% across 2023-2025 despite model upgrades. Java code fails 72% of the time, Cross-Site Scripting (CWE-80) prevention fails in 86% of samples, and Spracklen et al. (USENIX Security 2025) found 19.7% of AI-suggested package names do not exist - the slopsquatting supply-chain risk.

Why do most teams use multiple LLMs instead of one?

Datadog's 2026 State of AI Engineering report found that 69% of companies now run three or more AI models in production (up from ~40% in 2024) and that 77% of companies using OpenAI also use at least one other provider, largely to avoid lock-in. LangChain's 2026 State of Agent Engineering report identifies multi-model architectures as the production norm. Teams cite cost routing, fallback redundancy and capability matching as the main reasons.

Sources used in this report

Every statistic above traces back to a primary publisher.

- Anthropic (Claude Opus 4.7, Sonnet 4.6, Haiku 4.5 model cards and pricing)

- OpenAI (GPT-5 model card and API pricing)

- Google DeepMind (Gemini 3 / 3.1 Pro model cards)

- Aider LLM Leaderboards

- SWE-bench Verified leaderboard

- LiveCodeBench leaderboard

- Stack Overflow 2025 Developer Survey (AI section, 49,000+ respondents)

- Stack Overflow Blog (July 2025 trust gap analysis)

- Stack Overflow 2024 Developer Survey (historical baseline)

- JetBrains State of Developer Ecosystem 2025 (n=24,534, Oct 2025)

- GitHub Octoverse 2025

- GitHub Research, Peng et al. 2022

- GitHub Research (Code Quality 2024)

- GitHub Research (Accenture enterprise study 2024)

- JetBrains State of Developer Ecosystem 2024 (by-tool breakdown baseline)

- Microsoft / GitHub (Copilot enterprise stats, FY2026 earnings)

- MIT Economics / SSRN paper 4945566 (Cui, Demirer, Jaffe, Musolff, Peng, Salz - 2025)

- McKinsey, Economic Potential of Generative AI

- McKinsey, Navigating the GenAI Disruption in Software

- Google Research, Paradis et al. 2024 (arXiv 2410.12944)

- Zoominfo enterprise Copilot study (arXiv 2501.13282, Bakal et al. 2025)

- UC San Diego IT Services GitHub Copilot study (Aug 2024)

- Grand View Research (AI Code Tools Market Report)

- Mordor Intelligence (AI Code Tools Market 2025-2030)

- SNS Insider (AI Code Tools Market 2024-2032)

- Veracode 2025 GenAI Code Security Report

- Veracode October 2025 update

- Spracklen et al., USENIX Security 2025 (slopsquatting research)

- Datadog 2026 State of AI Engineering

- LangChain 2026 State of Agent Engineering

- DeepSense CTO Exclusive Survey 2025

- Sōzō Pulse CTO Survey 2024 (SoftBank Vision Fund)

- Gartner April 2024 press release (75% by 2028 forecast)

- Stanford HAI AI Index 2026

A full audit trail with the exact URL behind every statistic on this page is published at /blog/_research/ai-coding-models-statistics-2026.json. Every claim is traceable to a primary publisher.

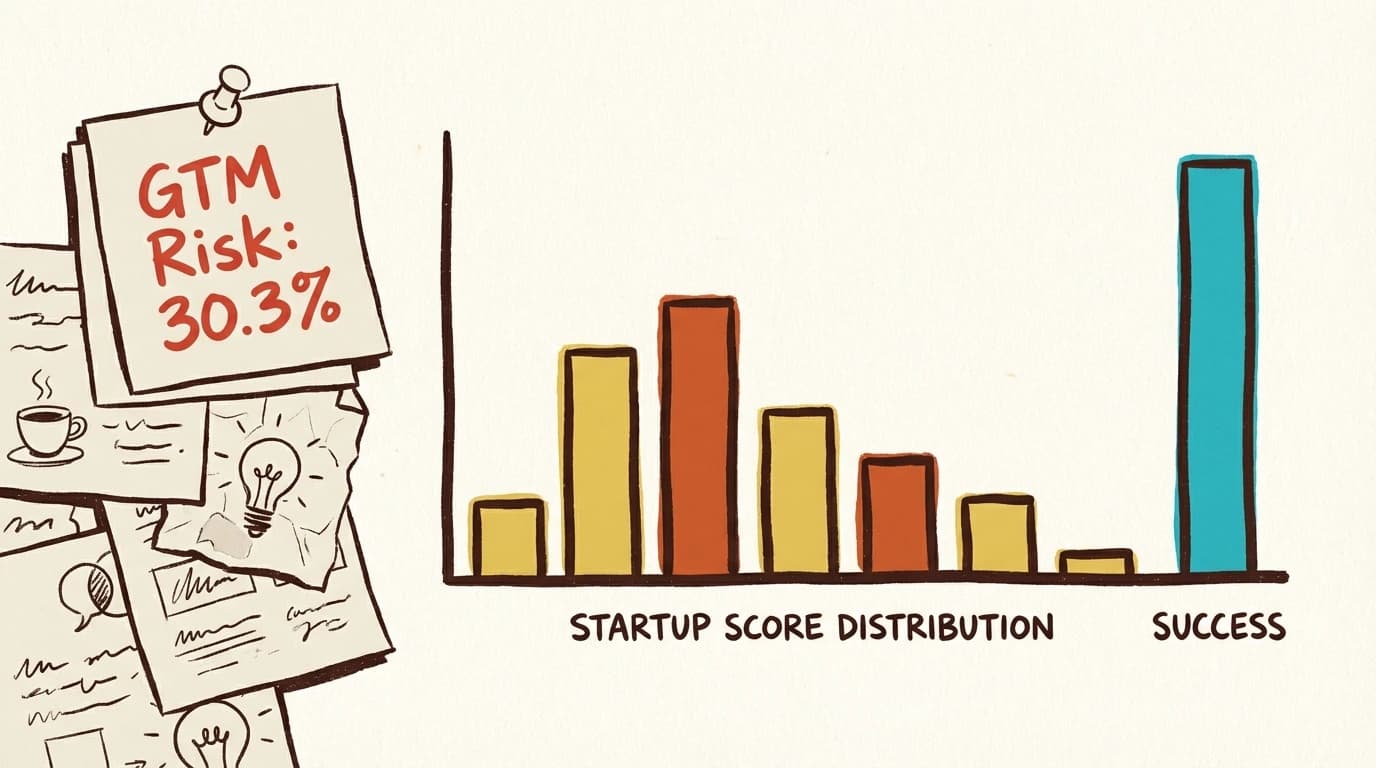

Want to run this process in 60 seconds?

Preuve AI analyzes your startup idea against live market data using the same validation frameworks investors use.

Scan My Idea (Free)Free audit. Takes 60 seconds.