TL;DR

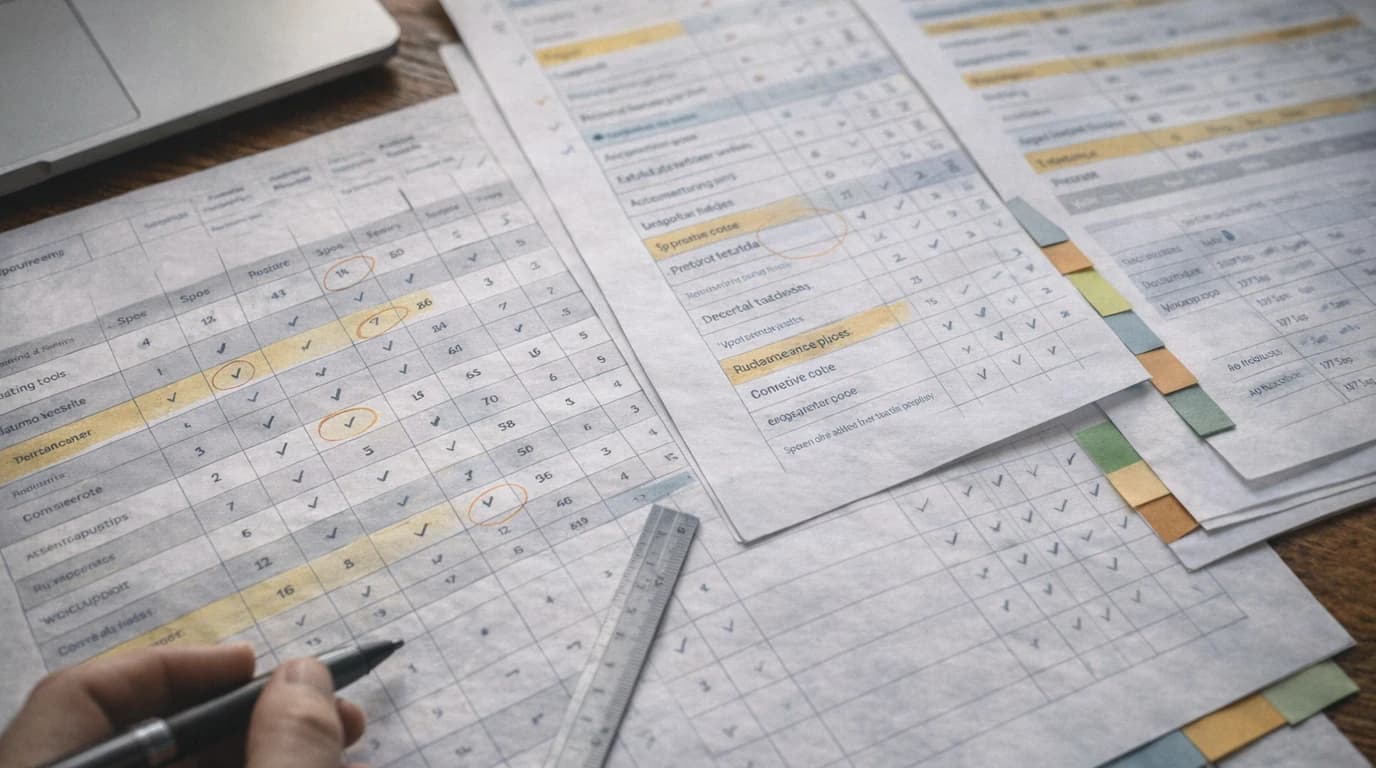

Most founders validate by collecting evidence that supports their idea. That's confirmation bias, not validation. This is a 7-day checklist designed to disprove your business idea using sourced data, buyer conversations, and pre-sales. If the idea survives the week, build it. More than 4,000 ideas have been tested with this framework, and 90% scored below 70.

Business idea validation is the process of testing whether a specific customer will pay for a specific outcome, using sourced market data and buyer conversations, before writing any code.

CB Insights' March 5, 2026 analysis of 431 VC-backed shutdowns puts poor product-market fit at 43% of failures. Not bad code. Not poor execution. The product never found a market.

The fix sounds simple: validate your idea before you build. But most founders get validation wrong. They don't skip the step. They do it in a way that confirms what they already believe.

Whether you call it a startup idea, business idea, or product concept, the job is the same: find out if a specific buyer has a painful problem, already tries to solve it, and will pay for a better option.

I've watched this happen across 4,000+ ideas scanned on Preuve AI (formerly Test Your Idea). The pattern is always the same: founders collect evidence that supports their thesis and ignore everything else.

This is a 7-day process I put together for testing a business idea using evidence, not optimism. Every step is designed to disprove your idea, not support it. If it survives the week, build it. If it doesn't, you saved yourself months.

The sequence also fits the spirit of Steve Blank's customer development process: gather evidence first, so you don't confuse activity with traction.

What most founders get wrong about validation

Before the checklist, let me name the three lies that kill ideas before launch.

Lie 1: Confirmation bias disguised as research

You Google your idea. You find one Reddit thread where someone says "I wish this existed." You stop searching. That's not research. That's a lawyer building a case.

Real validation means actively looking for reasons NOT to build. Search for "why [your idea] failed." Search for "[competitor] shutting down." Search for "[your market] declining." If you only collect evidence that supports your thesis, you're not validating. You're building a comfort blanket.

Lie 2: Friend and family feedback

"I told my friends about my idea and they all said it's great."

Of course they did. They like you. They want to be supportive. They also have no idea what the market looks like, what competitors charge, or whether anyone would pay $29/month for this.

The friends-and-family trap isn't that people lie to you. It's that their feedback has zero predictive value. Someone saying "that sounds cool" is not a buying signal. Someone handing you money is.

Lie 3: AI confidence without sources

This is the 2026 version of the problem. You paste your idea into ChatGPT. It gives you a market size, competitor names, and a growth strategy. It sounds authoritative.

The issue: LLMs hallucinate. They invent competitor names that don't exist, fabricate market sizes with no source, and make up pricing data. They do it confidently, which makes it worse than no data at all: false data you trust is more dangerous than a gap you know about.

The rule for any AI-assisted validation: if you can't click through to the source, assume the number is wrong.

Day 0: Write the falsifiable claim

Before any research, force yourself to be specific. Fill this in:

"I believe [specific customer] will pay [price] for [outcome] because [pain]."

Examples:

1. "I believe solo founders shipping their first SaaS will pay $29 for a sourced competitor analysis because they waste 10+ hours doing it manually and ChatGPT makes up the data."

2. "I believe 5-30 person marketing agencies will pay $149/month for automated lead scoring because they spend 9+ hours/month on discovery calls that go nowhere."

If you can't fill in every bracket, you don't have an idea yet. You have a vibe. That's fine, but don't build on a vibe.

A falsifiable claim gives you something to test. "Solo founders will pay $29" is testable. "People would probably find this useful" is not.
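The bracket rule can be treated as a checklist. The sketch below is hypothetical and not part of any tool. It models the Day 0 template as a small Python dataclass and refuses to render a claim while any bracket is empty. The class and method names are illustrative assumptions.

```python
from dataclasses import dataclass, fields


@dataclass
class Claim:
    """A Day 0 claim; each field is one bracket in the template."""
    customer: str
    price: str
    outcome: str
    pain: str

    def missing(self) -> list:
        # A bracket is unfilled if it is empty or only whitespace.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        gaps = self.missing()
        if gaps:
            raise ValueError("Not testable yet; fill in: " + ", ".join(gaps))
        return (f"I believe {self.customer} will pay {self.price} "
                f"for {self.outcome} because {self.pain}.")


claim = Claim(
    customer="solo founders shipping their first SaaS",
    price="$29",
    outcome="a sourced competitor analysis",
    pain="they waste 10+ hours doing it manually",
)
print(claim.render())

# A "vibe": some brackets are left blank, so it cannot be rendered as a claim.
vibe = Claim(customer="people", price="", outcome="something useful", pain="")
print(vibe.missing())  # ['price', 'pain']
```

The check only enforces that each bracket has an answer. Whether "people" is specific enough is still your call on Day 1.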

Day 1: Define the exact buyer

"Founders" is not a customer segment. "Teams" is not a customer segment. You need one specific person.

Pick one:

- Solo founders shipping MVPs with vibe coding tools

- Agency owners selling MVP builds to non-technical clients

- Ops managers at Shopify brands doing 7 figures

Then answer three questions about that person:

Where do they hang out? Not "the internet." Specific subreddits, Slack communities, Twitter circles, newsletters. If you can't name 3 places where your buyer spends time, you can't reach them.

What triggers them to buy? There's a moment when someone goes from "I should probably deal with this" to "I'm buying something today." For buyers of a startup validation tool, the trigger is usually "I just quit my job to build this" or "I'm about to spend $5K on development." What's the trigger for your buyer?

What have they tried before? If the answer is "nothing," your problem might not be painful enough. If the answer is "three other tools and none worked," you have a positioning opportunity. If the answer is "they built an internal spreadsheet," you know what you're replacing.

The output of Day 1 is one paragraph describing your buyer so specifically that you could find 10 of them on LinkedIn in 15 minutes. If you can't, narrow it down.
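One way to keep yourself honest on Day 1 is to write the buyer profile as structured data and flag the gaps. This is a hypothetical sketch. The `VAGUE_CHANNELS` set and the field names are illustrative assumptions, and the "at least 3 specific places" rule comes from the questions above.

```python
from dataclasses import dataclass, field

# Hypothetical examples of answers too broad to count as a "place".
VAGUE_CHANNELS = {"the internet", "online", "social media", "everywhere"}


@dataclass
class BuyerProfile:
    description: str
    channels: list = field(default_factory=list)  # where they hang out
    trigger: str = ""        # the moment they decide to buy today
    tried_before: str = ""   # tools, spreadsheets, or "nothing"

    def specific_channels(self) -> list:
        # Drop answers like "the internet" that name no reachable place.
        return [c for c in self.channels if c.strip().lower() not in VAGUE_CHANNELS]

    def gaps(self) -> list:
        problems = []
        if len(self.specific_channels()) < 3:
            problems.append("name at least 3 specific places your buyer spends time")
        if not self.trigger.strip():
            problems.append("describe the buying trigger")
        if not self.tried_before.strip():
            problems.append("find out what they have tried before")
        return problems


buyer = BuyerProfile(
    description="Solo founders shipping MVPs with vibe coding tools",
    channels=["r/SaaS", "Indie Hackers", "the internet"],
    trigger="about to spend $5K on development",
    tried_before="ChatGPT plus a manual spreadsheet",
)
print(buyer.gaps())  # ['name at least 3 specific places your buyer spends time']
```

An empty `gaps()` list doesn't prove the profile is right. It only means the profile is specific enough to go looking for those 10 people.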

Day 2: Reality check with sourced data

This is the most important day. And the one where most founders lie to themselves.

You need four types of signals, and every one of them needs a source you can verify:

Search demand and CPC

Google Trends tells you if interest is growing or declining. Google Ads Keyword Planner gives keyword ideas, monthly search estimates, and cost ranges. A high cost per click (CPC) does not guarantee a great business, but it is a strong demand signal when paired with buyer pain.

What to check: your core problem keyword, your category keyword, and your top competitor's brand name. If all three are flat or declining, that's a warning.
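If you export the three interest-over-time series, a least-squares slope is one simple way to label each keyword as growing, flat, or declining. This is a minimal sketch. The `flat_band` threshold is an assumption, and the numbers are illustrative, not real Google Trends data.

```python
from statistics import fmean


def slope(series):
    """Least-squares slope of interest values against their time index."""
    n = len(series)
    if n < 2:
        raise ValueError("need at least two data points")
    x_bar, y_bar = (n - 1) / 2, fmean(series)
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(series))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return num / den


def classify(series, flat_band=0.5):
    # flat_band is an assumed threshold, in interest points per period.
    s = slope(series)
    if s > flat_band:
        return "growing"
    if s < -flat_band:
        return "declining"
    return "flat"


def demand_warning(trends):
    """True when no keyword is growing, i.e. all are flat or declining."""
    return all(classify(series) != "growing" for series in trends.values())


# Illustrative numbers only, not real Google Trends data.
trends = {
    "problem keyword": [40, 41, 40, 40, 39, 40],
    "category keyword": [55, 54, 52, 50, 49, 47],
    "competitor brand": [20, 20, 21, 20, 19, 20],
}
print({k: classify(v) for k, v in trends.items()})
print(demand_warning(trends))  # True
```

A straight-line fit ignores seasonality. Treat the label as a prompt to look at the chart, not as a verdict.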

Competitors

Not "are there competitors?" There are always competitors. The questions that matter: How many? What do they charge? Where are their weaknesses? When did they launch? Have they raised money?

Check Crunchbase for funding data. Check G2 and Capterra for user reviews. Check Product Hunt for launch traction. Check their pricing pages directly.

If you find zero competitors, that's usually bad news, not good news. It means either nobody has tried (because the market doesn't exist) or you're not searching hard enough. I wrote a full guide on how to find competitors for your startup.

Pricing benchmarks

What do people pay today for the closest alternative? Not what you think they should pay. What they actually pay. Check competitor pricing pages, G2 reviews that mention price, and Reddit threads where people discuss costs.

This grounds your Day 0 claim. If you said "founders will pay $49/month" but every competitor charges $19 one-time, your pricing assumption needs evidence.
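Monthly and one-time prices aren't directly comparable. A quick sanity check is to normalize both to a yearly cost and compare against the median competitor. This is a sketch under two assumptions: a 12-month horizon, and hypothetical competitor prices that reuse the $19 one-time example above.

```python
from statistics import median


def yearly_cost(price, billing):
    """Normalize to a 12-month cost so monthly and one-time prices compare.

    The 12-month horizon is an assumption; pick one that matches how long
    your buyer actually uses the product.
    """
    if billing == "monthly":
        return price * 12
    if billing == "one-time":
        return price
    raise ValueError(f"unknown billing model: {billing!r}")


def price_gap(claim_price, claim_billing, competitors):
    """Ratio of your claimed yearly price to the median competitor's."""
    benchmark = median(yearly_cost(p, b) for p, b in competitors)
    return yearly_cost(claim_price, claim_billing) / benchmark


# Hypothetical competitor pricing, as if copied from their pricing pages.
competitors = [(19, "one-time"), (19, "one-time"), (29, "one-time")]
gap = price_gap(49, "monthly", competitors)
print(f"{gap:.1f}x the median competitor")  # 30.9x the median competitor
```

A ratio near 1 means your price matches what buyers already pay. A ratio like 30x means you need interview evidence that your outcome is worth it.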

Community proof

Are real people asking for this? Search Reddit, Indie Hackers, Hacker News, Twitter, and niche forums for your problem keywords.

What counts: threads where someone describes the pain and asks for a solution. What doesn't count: your own post asking "would you use this?" (that's a leading question that generates polite lies).

Can you trust AI tools for startup validation?

If you use any AI tool for Day 2 research (and you probably should for speed), apply one rule: can you click through to the source?

If a tool tells you "your main competitor is XYZ and they raised $2M," can you click and see the Crunchbase page? If a tool says "search volume for your keyword is growing 40% year-over-year," can you see the Google Trends chart?

If yes, the data is useful. If no, treat it as a hypothesis to verify manually.
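The click-through rule can be applied mechanically to a list of findings before you trust any of them. In this minimal sketch, the `Finding` type is hypothetical and the Crunchbase URL is made up to match the XYZ example. The check only confirms that an http(s) link exists. You still have to open it.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse


@dataclass
class Finding:
    statement: str
    source_url: Optional[str] = None


def has_clickable_source(finding):
    # Only checks that a usable http(s) link exists; you still have to open it
    # and confirm the page actually supports the statement.
    if not finding.source_url:
        return False
    parsed = urlparse(finding.source_url)
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def triage(findings):
    usable = [f for f in findings if has_clickable_source(f)]
    hypotheses = [f for f in findings if not has_clickable_source(f)]
    return usable, hypotheses


findings = [
    # Hypothetical URL, for illustration only.
    Finding("Competitor XYZ raised $2M", "https://www.crunchbase.com/organization/xyz"),
    Finding("Search volume is growing 40% year-over-year"),
    Finding("Market is worth $4B", "source: industry report"),
]
usable, hypotheses = triage(findings)
for f in hypotheses:
    print("verify manually:", f.statement)
```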

This is where most AI validation tools fail. They generate confident-sounding market analysis from an LLM, but the numbers aren't connected to anything real. One Trustpilot reviewer who compared a sourced validation report with AI-generated research described it as "much different from the deep research results I got from ChatGPT or Claude." The gap isn't the quality of the writing. It's verifiability.

For a breakdown of which tools source their data and which don't, see my comparison of 8 startup validation tools.

Day 3: Identify the top 2 risks

Every idea has dozens of risks. You can't address them all before building. But you can identify the two that would kill the idea fastest.

Most startup ideas fail for one of these reasons:

Nobody cares (urgency risk). The problem exists but it's not painful enough to pay for. People have workarounds. They've lived with the pain for years and they're fine. If your Day 2 research shows no community threads complaining about this problem, urgency risk is high.

Distribution is unclear (reach risk). You can't find your buyers efficiently. The market is fragmented. There's no single channel where your exact customer congregates. If your Day 1 exercise couldn't name 3 specific places your buyer hangs out, reach risk is high.

It's too crowded (differentiation risk). Multiple well-funded competitors already do what you're proposing. Your Day 2 research found 10+ competitors with similar features and similar pricing. If you can't articulate a specific wedge in one sentence ("I'm the only one that does X"), differentiation risk is high.

Pick your top 2. Write them down. Days 4 and 5 are designed to stress-test those specific risks.
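To make the pick less of a gut call, you can turn your Day 1 and Day 2 outputs into a rough severity score per risk and take the two highest. The 0-3 scale and every threshold below are hypothetical assumptions. Only the three risk definitions come from the list above.

```python
def risk_scores(community_threads, specific_channels, similar_competitors, has_wedge):
    """Hypothetical 0-3 severity per risk, built from Day 1 and Day 2 outputs."""
    # Urgency: no threads describing the pain is the strongest warning.
    if community_threads == 0:
        urgency = 3
    elif community_threads < 3:
        urgency = 2
    else:
        urgency = 0
    # Reach: each missing channel, out of the 3 from Day 1, adds risk.
    reach = max(0, 3 - specific_channels)
    # Differentiation: a crowded field, plus no one-sentence wedge.
    differentiation = (2 if similar_competitors >= 10 else 0) + (0 if has_wedge else 1)
    return {"urgency": urgency, "reach": reach, "differentiation": differentiation}


def top_two(scores):
    # Ties keep dictionary order because Python's sort is stable.
    return sorted(scores, key=scores.get, reverse=True)[:2]


scores = risk_scores(community_threads=1, specific_channels=2,
                     similar_competitors=12, has_wedge=False)
print(scores)           # {'urgency': 2, 'reach': 1, 'differentiation': 3}
print(top_two(scores))  # ['differentiation', 'urgency']
```

The weights are arbitrary. Their job is to make you write down why one risk outranks another.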

Day 4: Talk to 10 buyers

Not users. Not friends. Buyers. People who match your Day 1 profile and have the budget and authority to pay for what you're building.

The questions that matter:

"How do you solve this today?" This reveals your real competition. It's rarely another software tool. It's usually a spreadsheet, a VA, a manual process, or "I just don't do it." That answer shapes your positioning.

"What's annoying about it?" Listen for emotional language. "It's fine" means no pain. "I hate it but I'm stuck" means opportunity. "I tried three tools and they all sucked" means strong demand with bad supply.

"What would make you switch?" This tells you what feature or outcome actually matters. It's almost never what you think. Founders assume people want more features. Buyers usually want fewer steps, faster results, or lower risk.

"What have you paid for in the past?" This grounds your pricing. If they've paid $200/month for a similar tool, your $29 one-time price will feel cheap (good for conversion, bad for revenue). If they've never paid for anything in this category, you have an education problem.

Your goal is not to pitch. It's to collect real language. The words buyers use to describe their pain become your landing page copy. The objections they raise become your FAQ. The alternatives they mention become your competitive positioning.

10 conversations is the minimum. You'll start seeing patterns by conversation 6-7.
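Patterns are easier to see if you code each conversation the same way and then count. This is a hypothetical sketch. The pain labels ("none" for "It's fine", "stuck", "bad_supply") mirror the answers described above. The `min_share` cutoff and the ten interview rows are illustrative assumptions.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interview:
    current_solution: str  # "How do you solve this today?"
    pain: str              # your coding of "What's annoying?": "none", "stuck", "bad_supply"
    paid_before: bool      # has paid for something in this category


def patterns(interviews, min_share=0.5):
    n = len(interviews)
    solutions = Counter(i.current_solution for i in interviews)
    pains = Counter(i.pain for i in interviews)
    return {
        # Workarounds mentioned by at least min_share of buyers.
        "dominant_workarounds": [s for s, c in solutions.items() if c / n >= min_share],
        # Share of buyers showing any pain ("It's fine" is coded as "none").
        "pain_share": 1 - pains["none"] / n,
        "paid_before_share": sum(i.paid_before for i in interviews) / n,
    }


# Ten illustrative, made-up interview codings.
rows = [
    ("spreadsheet", "stuck", True), ("spreadsheet", "stuck", False),
    ("spreadsheet", "none", False), ("spreadsheet", "bad_supply", True),
    ("spreadsheet", "stuck", False), ("spreadsheet", "stuck", True),
    ("VA", "bad_supply", True), ("VA", "none", False),
    ("nothing", "none", False), ("nothing", "stuck", False),
]
notes = [Interview(*r) for r in rows]
print(patterns(notes))
```

Keep the raw quotes alongside the codes. The counts tell you what to look at, and the quotes become your copy.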

Day 5: Pre-sell

Validation is money, not compliments.

"That sounds cool" = worthless. "Here's my credit card" = validated.

You don't need a product to pre-sell. You need an offer. Three formats that work:

Paid pilot. "I'll do this manually for your first 3 clients for $X." This validates demand and teaches you the workflow before you automate it.

Concierge version. "I'll deliver the result by hand this week for $X." Higher touch, but proves willingness to pay.

Paid audit. "I'll analyze your [situation] and give you a report with recommendations for $X." This is especially effective for B2B. Agencies and consultants understand paying for expertise.

Set a specific target. "If 2 out of 10 conversations convert to a paid pilot, I build. If 0 do, I kill it."

The price doesn't have to be your final price. It just has to be non-zero. Free pilots don't validate anything. People will say yes to free forever.
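The pre-sell target works best when it is written down before the calls, so you can't move it afterward. This minimal sketch encodes the "2 out of 10 builds, 0 kills" rule and ignores free pilots. Treating exactly one conversion as "inconclusive" is an assumption, because the rule above doesn't cover that case. The `Offer` type is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    buyer: str
    price: float  # agreed price for the pilot, concierge job, or audit
    paid: bool    # did the buyer actually pay?


def paid_conversions(offers):
    # Free pilots never count: the price must be non-zero and actually paid.
    return sum(1 for o in offers if o.paid and o.price > 0)


def presell_verdict(offers, build_at=2):
    converted = paid_conversions(offers)
    if converted >= build_at:
        return "build"
    if converted == 0:
        return "kill"
    return "inconclusive"  # assumption: the stated rule doesn't cover exactly 1


offers = [Offer(f"buyer {i}", 0, False) for i in range(10)]
offers[0] = Offer("buyer 0", 500, True)
offers[1] = Offer("buyer 1", 0, True)  # free pilot: doesn't count
print(paid_conversions(offers), presell_verdict(offers))  # 1 inconclusive
```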

Days 6-7: Decide

You have data now. Not opinions. Not AI-generated confidence. Actual signals from real people and real sources.

If you got paid: Build the minimum version that fulfills the promise you sold. Not the full vision. The specific thing someone paid for. Ship in 2-4 weeks.

If you got strong pain but no money: The problem is real but your offer is wrong. Change the price, the packaging, or the scope. Run Day 5 again with the new offer. Give yourself one more cycle before you move on.

If you got neither (no pain, no money): Kill it. This is the hardest part and the most valuable. You saved yourself 3-6 months of building something nobody wants. That clarity is worth more than any product.

Don't negotiate with the data. If 10 conversations produced zero buying signals, the answer is clear. The next idea might be the one. But this one isn't.
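The three outcomes above reduce to a short decision rule. This is a sketch, not a scoring model. The inputs are assumptions about how you summarize the week: paid customers, whether interviews showed strong pain, and how many Day 5 rounds you have run. It allows exactly one extra pre-sell cycle.

```python
def decide(paid_customers, strong_pain, day5_runs):
    """Map the week's evidence to one of the three outcomes.

    day5_runs counts the pre-sell rounds already done; the rule allows
    one more cycle after the first before moving on.
    """
    if paid_customers > 0:
        return "build"        # minimum version of what was sold, ship in 2-4 weeks
    if strong_pain and day5_runs < 2:
        return "rerun_day5"   # change price, packaging, or scope first
    return "kill"             # no money, and either no pain or no cycles left


print(decide(paid_customers=0, strong_pain=True, day5_runs=1))  # rerun_day5
```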

The fast start (if you want Day 2 done in 60 seconds)

The manual version of Day 2 takes 2-3 hours per idea. If you're comparing multiple ideas or want to skip straight to the highest-risk assumptions, run a sourced scan first.

I built Preuve AI to pull from 40+ live data sources and deliver a scored report in 60 seconds. Competitors with names, pricing, and weaknesses. Market sizing with sources. Demand signals from Reddit, Hacker News, and Google Trends. Every number links to where it came from.

It won't replace Days 4-5. Nothing replaces talking to buyers. But it catches the red flags that save you from wasting the other 6 days on an idea the data already says no to.

The free scan covers the basics: viability score, top blockers, and a competitor preview. If the output changes how you'd spend the week, that's your answer.

For agencies running this process on client ideas, see how to pre-qualify startup leads before a discovery call.

Want the narrower paths? Use the no-build startup validation guide if you want to validate without writing code, the product idea validation guide if the problem is solution-first, and the SaaS validation guide if pricing, distribution, and switching behavior are central.

Once the validation signal is real, tighten the next two assumptions with competitor research and TAM, SAM, and SOM sizing.

Final note

Building is cheap now. Any founder can ship an MVP in a weekend with AI tools.

That's exactly why validation matters more than it ever has. When the cost of building approaches zero, the cost of building the wrong thing is 100% opportunity cost. Every weekend you spend on an idea nobody wants is a weekend you didn't spend on the one that works.

Execution is cheap. Distribution and differentiation aren't. Spend 7 days finding out if anyone cares. Then build.

Frequently Asked Questions

How long does it take to validate a startup idea?

7 days if you follow this checklist. Days 0-1: define the claim and buyer. Day 2: sourced research. Day 3: identify risks. Day 4: talk to 10 buyers. Day 5: pre-sell. Days 6-7: decide. The research phase (Day 2) can be compressed to 60 seconds with a sourced validation tool, but buyer conversations cannot be skipped.

Can I validate a startup idea with AI?

Partially. AI tools speed up market research, but most hallucinate competitor names, market sizes, and pricing. The rule: if you can't click through to the source, assume the number is wrong. Use AI for speed, then verify every claim that matters.

What is the biggest mistake founders make when validating ideas?

Confirmation bias. They search for evidence that supports their idea and stop when they find it. Real validation means actively looking for reasons NOT to build. If the idea survives that, it's worth pursuing.

How many customer interviews do I need?

Minimum 10 conversations with potential buyers (not friends, not users, buyers). You'll start seeing patterns by conversation 6-7. The goal is not to pitch but to collect real language about their pain and buying behavior.

What if my validation shows my idea won't work?

That's the most valuable outcome. You saved 3-6 months of building something nobody wants. Kill it, move on. The next idea might be the one. Every successful founder has a graveyard of killed ideas behind them.

Is talking to friends and family good enough for validation?

No. Friends-and-family feedback has zero predictive value. They like you. They'll say it sounds great. They have no idea what the market looks like or whether anyone would pay. Someone saying "that sounds cool" is not a buying signal. Someone handing you money is.

Want to run this process in 60 seconds?

Preuve AI analyzes your startup idea against live market data using the same validation frameworks investors use.
