TL;DR

ChatGPT shipped memory in September 2024, Claude in October 2025. Mem0 hit 51,000 GitHub stars and 93.4% on LongMemEval. claude-mem crossed 46,100 stars and MemPalace 41,200 stars in weeks. The vector database market is on a 27.5% CAGR, on track to nearly triple from $3.2B to $8.95B by 2030. This page collects 60+ verified statistics on AI memory systems for 2026, with every number sourced from peer-reviewed papers, vendor benchmarks, or primary surveys.

- Mem0's 2026 token-efficient algorithm hits 93.4% on LongMemEval and 91.6% on LoCoMo while averaging under 7,000 tokens per retrieval - vs 25,000+ for full-context (Mem0 Research, 2026).

- 57% of organizations have AI agents in production in 2026, but quality (33%) and latency (20%) remain the top blockers - both downstream of memory recall (LangChain State of Agent Engineering 2026).

- Anthropic completed Claude memory rollout to all paid users on October 23, 2025, joining ChatGPT and Gemini.

- The vector database market reached $3.2B in 2025 and is projected to hit $8.95B by 2030 at 27.5% CAGR (MarketsandMarkets).

I run a multi-LLM pipeline for a living. At Preuve AI I route across Claude, Gemini, GPT, Grok and Exa to validate startup ideas with primary sources, and the question I get most from journalists in 2026 is no longer "which model is best" - it is "how do you make any of these remember anything." If you searched "best Claude Code memory system" this year, you got buried under Mem0, Letta (MemGPT), Zep, MemPalace, claude-mem, LightRAG, Open Brain and Karpathy's LLM Wiki. They are not all the same thing, and the benchmarks are not directly comparable.

I built this roundup of where AI memory stands in May 2026, sourced from the academic papers, vendor benchmarks and analyst reports I cite myself when reporters call. The same sourcing discipline runs through my AI coding models statistics roundup and our 1,000-startup analysis. Every stat below lists a named source and a year. If I could not trace a number to a real research source, I dropped it.

In this report

- Six numbers behind the TLDR

- ChatGPT, Claude and Gemini memory adoption

- Open-source memory frameworks: Mem0, Letta, Zep, MemPalace, claude-mem

- LongMemEval, LoCoMo and BEAM benchmarks

- Context rot and lost-in-the-middle research

- Vector database market

- Enterprise agent memory adoption

- Karpathy's LLM Wiki pattern

- Where AI memory is heading by 2030

- FAQ

Six numbers behind the TLDR

The TLDR has the headlines. Six more numbers fill in the cost-performance picture, the academic gap, the user base behind ChatGPT memory, and the open-source flywheel.

- Mem0's ECAI 2025 paper measured 91% lower p95 latency (1.44s vs 17.12s) and 90% lower token cost than full-context on LOCOMO (arXiv:2504.19413).

- Commercial chat assistants and long-context LLMs show a ~30 point accuracy drop on LongMemEval vs oracle retrieval (Wu et al., ICLR 2025).

- ChatGPT memory is now available across a base of 700M weekly active users (OpenAI / IntuitionLabs, August 2025).

- Mem0: 51,000+ GitHub stars, $24M raised by October 2025 (Mem0 / State of AI Agent Memory 2026).

- claude-mem: 46,100 GitHub stars within weeks of launch (Augment Code).

- MemPalace: 41,200 GitHub stars, nearly doubled from 23K in April 2026 (danilchenko.dev).

ChatGPT, Claude and Gemini memory adoption

The most under-reported finding of the last 24 months is that all three frontier chat assistants now ship persistent memory. OpenAI began testing ChatGPT memory in February 2024 with a small subset of Free and Plus users, then made it generally available to Free, Plus, Team and Enterprise on September 5, 2024 (OpenAI). On April 10, 2025, OpenAI expanded ChatGPT memory to reference all past chats for Plus and Pro tiers; the lightweight free-tier version followed June 3, 2025. Combined with ChatGPT's 700M weekly active users (August 2025), this is the largest deployment of LLM persistent memory to date.

Anthropic shipped Claude memory in two waves. September 10, 2025: Team and Enterprise plans got memory first (Computerworld). October 23, 2025: rollout completed to all Pro and Max subscribers (CNET, AI Business). Free-tier Claude users still do not have memory as of May 2026. The architectural choice that stood out to Forrester analysts: Claude uses project-scoped memory with strict isolation, not a global persistent profile - meaning context cannot leak between projects. ChatGPT and Gemini both default to a global persistent profile. Demand Signals' internal testing reports a 60% reduction in prompt-engineering time for recurring tasks once Claude memory is active.

Memory feature rollout timeline

| Provider | General availability | Free tier? | Memory scope |
|---|---|---|---|
| ChatGPT | Sep 5, 2024 (paid + free) | Yes (enhanced Jun 2025) | Global persistent profile |
| Claude | Sep 10, 2025 (Team/Enterprise); Oct 23, 2025 (Pro/Max) | No (as of May 2026) | Project-scoped, isolated |
| Gemini | 2024 | Yes | Global persistent profile |

Open-source memory frameworks: Mem0, Letta, Zep, MemPalace, claude-mem

Below the chat assistant layer, an open-source landscape exploded in 2025-2026. Five frameworks now matter: Mem0 (production-grade cross-tool layer), Letta/MemGPT (stateful agent framework with academic roots), Zep (temporal knowledge graph), MemPalace (local-first verbatim storage), and claude-mem (Claude Code IDE plugin). They are not interchangeable. They answer different questions about where memory lives and how the LLM retrieves it.

Mem0 leads on adoption. 51,000+ GitHub stars, $24M in funding by October 2025, and "used by 100,000+ developers" across YC portfolio companies and Fortune 500 customers per the State of AI Agent Memory 2026 report. The architecture is a three-tier system - user, session, and agent memory scopes - backed by a hybrid vector + graph + key-value store. When facts conflict, Mem0 self-edits rather than appending duplicates. The 2026 token-efficient algorithm closed the gap with Zep on temporal retrieval (older Mem0 scored 49.0% vs Zep's 63.8% on LongMemEval; the new algorithm hits 93.4%, per Mem0 Research).
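To make the "self-edits rather than appending duplicates" idea concrete, here is a minimal sketch of a store with the three scopes named above. It is not Mem0's API: `ScopedMemory`, the `ADD`/`UPDATE`/`NOOP` labels and the exact-key conflict rule are all assumptions for illustration. A real system would typically use an LLM or embeddings to decide whether two facts conflict.

```python
from dataclasses import dataclass, field

SCOPES = ("user", "session", "agent")  # the three scopes described in the text


@dataclass
class ScopedMemory:
    """Hypothetical in-memory store: {scope: {owner_id: {fact_key: value}}}."""

    _store: dict = field(default_factory=lambda: {s: {} for s in SCOPES})

    def write(self, scope: str, owner_id: str, key: str, value: str) -> str:
        """Store a fact; overwrite on conflict instead of appending a duplicate."""
        if scope not in SCOPES:
            raise ValueError(f"unknown scope: {scope}")
        facts = self._store[scope].setdefault(owner_id, {})
        if key not in facts:
            action = "ADD"       # new fact
        elif facts[key] == value:
            action = "NOOP"      # already known, nothing to change
        else:
            action = "UPDATE"    # conflicting value: replace the old one
        facts[key] = value
        return action

    def read(self, scope: str, owner_id: str) -> dict:
        """Return a copy of the facts held for one owner in one scope."""
        return dict(self._store[scope].get(owner_id, {}))


mem = ScopedMemory()
mem.write("user", "u1", "home_city", "Paris")
mem.write("user", "u1", "home_city", "Berlin")  # replaces Paris, no duplicate
print(mem.read("user", "u1"))
```

The point of the sketch is the write path: a conflicting fact replaces the stale one, so a later read returns a single current value instead of two contradictory entries.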

Letta (formerly MemGPT) leads on academic credibility. Originally a UC Berkeley research project that went viral, the open-source framework now has 13,000+ GitHub stars and raised a $10M seed led by Felicis with backing from Jeff Dean (Google DeepMind), Clem Delangue (Hugging Face) and Cristobal Valenzuela (Runway). Letta Code is the #1 model-agnostic open-source agent on Terminal-Bench, the leading AI coding benchmark, leveraging memory-first architecture. Letta is white-box and model-agnostic by design.

Zep wins on temporal reasoning. Its Graphiti temporal knowledge graph engine maintains a timeline of facts and relationships including periods of validity. The Zep paper (arXiv:2501.13956) reports Zep beats MemGPT 94.8% vs 93.4% on the Deep Memory Retrieval (DMR) benchmark, and delivers up to 18.5% accuracy improvement on LongMemEval and 90% lower response latency vs full-context baselines using gpt-4o.
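A rough way to picture "facts with periods of validity" is a record that carries a start and an optional end date, plus a point-in-time query. The sketch below is my own illustration, not Graphiti's data model or API; `TemporalFact`, `facts_as_of` and the half-open interval convention are assumptions.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class TemporalFact:
    """Hypothetical edge in a temporal graph: subject -predicate-> obj."""

    subject: str
    predicate: str
    obj: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the fact is still valid

    def holds_on(self, day: date) -> bool:
        # Half-open interval [valid_from, valid_to): an assumption of this sketch.
        if day < self.valid_from:
            return False
        return self.valid_to is None or day < self.valid_to


def facts_as_of(facts, subject, predicate, day):
    """Return the objects that were true for (subject, predicate) on a given day."""
    return [
        f.obj
        for f in facts
        if f.subject == subject and f.predicate == predicate and f.holds_on(day)
    ]


facts = [
    TemporalFact("alice", "works_at", "Acme", date(2023, 1, 1), date(2025, 3, 1)),
    TemporalFact("alice", "works_at", "Globex", date(2025, 3, 1)),
]

print(facts_as_of(facts, "alice", "works_at", date(2024, 6, 1)))
print(facts_as_of(facts, "alice", "works_at", date(2025, 6, 1)))
```

Keeping the superseded fact with a closed validity window, rather than deleting it, is what lets a system answer "where did Alice work in 2024?" as well as "where does she work now?".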

MemPalace leads on local-first. Co-created by actress Milla Jovovich and developer Ben Sigman, MemPalace stores conversations verbatim (no LLM summarization at write time) and runs entirely locally on ChromaDB + SQLite at zero API cost - vs Mem0 ($19-249/month) and Zep ($25+/month). MemPalace posts 96.6% Recall@5 on LongMemEval, and the repo nearly doubled from 23,000 to 41,200 GitHub stars in April 2026 alone. The honest caveat: an independent April 2026 audit (GitHub Issue #29 by dial481) confirmed the score is reproducible but largely attributable to ChromaDB's default all-MiniLM-L6-v2 embedding model rather than the palace spatial metaphor itself. The team walked back earlier "100% benchmark" claims after community pushback.

claude-mem leads on Claude Code IDE integration. The thedotmack/claude-mem plugin hit 46,100 GitHub stars by 2026 (Augment Code). It hooks into 5 lifecycle events (SessionStart, UserPromptSubmit, PostToolUse, Stop, SessionEnd) and uses Claude's agent-sdk + ChromaDB hybrid vector search with local all-MiniLM-L6-v2 embeddings - no external API calls. A separate project, rohitg00/agentmemory, shows how much hybrid search adds over single-method retrieval: its BM25+Vector hybrid reaches 95.2% Recall@5 and 98.6% Recall@10 on LongMemEval, against a BM25-only baseline of 86.2% Recall@5.
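For readers who have not built one, here is a small sketch of what BM25 + vector hybrid retrieval can look like. It is not the code of any framework named above. Two things are assumptions of mine: the "vector" side uses character-trigram cosine similarity as a dependency-free stand-in for a real embedding model such as all-MiniLM-L6-v2, and the two rankings are merged with reciprocal rank fusion (RRF), which is one common fusion method but not necessarily the one these projects use.

```python
import math
from collections import Counter


def tokenize(text):
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Plain BM25 over whitespace tokens; returns one score per document."""
    tokenized = [tokenize(d) for d in docs]
    n = len(docs)
    avg_len = sum(len(t) for t in tokenized) / n
    df = Counter(term for toks in tokenized for term in set(toks))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def trigram_vector(text):
    """Stand-in for an embedding: counts of character trigrams."""
    padded = f"  {text.lower()}  "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a, b):
    dot = sum(a[k] * b[k] for k in a if k in b)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def rank(scores):
    """Document indices ordered best-first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def hybrid_search(query, docs, top_k=5, rrf_k=60):
    """Fuse the lexical and vector rankings with reciprocal rank fusion."""
    lexical = rank(bm25_scores(query, docs))
    qv = trigram_vector(query)
    dense = rank([cosine(qv, trigram_vector(d)) for d in docs])
    fused = Counter()
    for ranking in (lexical, dense):
        for position, doc_id in enumerate(ranking):
            fused[doc_id] += 1.0 / (rrf_k + position + 1)
    return [doc_id for doc_id, _ in fused.most_common(top_k)]


docs = [
    "the user moved to berlin in march",
    "favorite food is ramen",
    "the user adopted a dog named rex",
]
print(hybrid_search("where did the user move", docs, top_k=2))
```

In this toy example BM25 alone cannot separate the first and third documents, because "move" does not match "moved" as a token; the similarity side breaks the tie. That is the intuition behind the gap between the BM25-only and hybrid Recall@5 figures.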

LongMemEval, LoCoMo and BEAM benchmarks

Three academic benchmarks now anchor the AI memory race. Each tests a different shape of the problem, and a system can lead on one and trail on another.

LongMemEval (Wu et al., ICLR 2025) is the leading benchmark for long-term memory in chat assistants. It contains 500 curated questions across 5 ability dimensions: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention. LongMemEval-S contains ~48 sessions per question and ~115K tokens per scenario; LongMemEval-M scales up to 1.5M tokens. The headline finding: commercial chat assistants and long-context LLMs show a 30% accuracy drop on LongMemEval vs oracle retrieval. Oracle GPT-4o reaches 92% accuracy when given only the answer-containing sessions; in the full interactive mode the same model drops to ~58%, a 34-point gap.
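Because "30%" and "34-point" are easy to conflate, a two-line calculation helps separate a gap in percentage points from a relative drop. The function name is mine; the 92% and ~58% inputs are the oracle and interactive figures quoted above.

```python
def accuracy_gap(oracle_pct, observed_pct):
    """Return (gap in percentage points, relative drop in percent)."""
    points = oracle_pct - observed_pct
    relative = points / oracle_pct * 100
    return points, relative


points, relative = accuracy_gap(92.0, 58.0)
print(f"{points:.0f} points, {relative:.1f}% relative drop")
# prints "34 points, 37.0% relative drop"
```

When comparing vendors, it is worth checking which of the two a given "X% better" claim refers to.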

LoCoMo (Maharana et al., ACL 2024) tests very long-term conversational memory. 50 conversations averaging 300 turns and 9,000 tokens across 19-35 sessions - 9x larger than the prior MSC benchmark. Even strong long-context LLMs and RAG approaches lag behind human performance, particularly on temporal reasoning. BEAM rounds out the set with production-scale evaluations: Mem0's BEAM scores are 64.1% at 1M tokens and 48.6% at 10M tokens, showing how recall degrades at enterprise scale.

The single most important number for production teams is the cost-performance crossover. Full-context inference reaches 72.9% accuracy on LOCOMO at 9.87s median latency and ~26K tokens per query. Mem0's selective pipeline reaches 66.9% accuracy at 0.71s median latency with ~1.8K tokens. That is a 6-point accuracy trade for a 90%+ token cost cut and a 91% cut in p95 latency (1.44s vs 17.12s). RevisionDojo and OpenNote real-world deployments report 40% token cost reductions in production.
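The reductions can be recomputed from the figures quoted on this page. The sketch below only does the arithmetic; the token counts are the rounded ~26K and ~1.8K values, and the p95 latencies (17.12s and 1.44s) are the ones cited from the Mem0 paper earlier in this report.

```python
def pct_reduction(baseline, candidate):
    """Percentage by which candidate is lower than baseline."""
    return (baseline - candidate) / baseline * 100


# Figures as quoted in this report (token counts are approximate).
full_context = {"accuracy": 72.9, "median_s": 9.87, "p95_s": 17.12, "tokens": 26_000}
mem0 = {"accuracy": 66.9, "median_s": 0.71, "p95_s": 1.44, "tokens": 1_800}

accuracy_trade = full_context["accuracy"] - mem0["accuracy"]
token_cut = pct_reduction(full_context["tokens"], mem0["tokens"])
median_cut = pct_reduction(full_context["median_s"], mem0["median_s"])
p95_cut = pct_reduction(full_context["p95_s"], mem0["p95_s"])

print(f"accuracy trade: {accuracy_trade:.1f} points")   # 6.0
print(f"token cut:      {token_cut:.1f}%")              # 93.1
print(f"median latency: {median_cut:.1f}%")             # 92.8
print(f"p95 latency:    {p95_cut:.1f}%")                # 91.6
```

With the rounded inputs the token cut comes out slightly above 90%, which is consistent with the "90% lower" headline.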

Headline LongMemEval scores (2026)

| System | Score | Metric | Source |
|---|---|---|---|
| MemPalace (raw) | 96.6% | Recall@5 retrieval-only | MemPalace.tech / danilchenko.dev, 2026 |
| agentmemory (BM25+Vector) | 95.2% | Recall@5 retrieval-only | rohitg00/agentmemory, 2026 |
| Mem0 2026 algorithm | 93.4% | End-to-end QA | Mem0 Research, 2026 |
| Oracle GPT-4o | 92.0% | End-to-end QA, oracle conditions | Wu et al., 2024 |
| Zep (gpt-4o) | ~80% | End-to-end QA, +18.5% over baseline | Zep paper, arXiv:2501.13956 |
| Mem0 base (older) | 49.0% | End-to-end QA | Zep blog, 2025 |

Scores not directly comparable: Recall@5 is retrieval-only (does the gold session appear in top-5 candidates), end-to-end QA includes the answer generation step. Both matter for different reasons.
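To show why a retrieval-only score cannot be read as an answer-accuracy score, here is a minimal sketch of Recall@k as described above: a question counts as a hit if any gold session appears in the top-k retrieved candidates. The session IDs and the four sample questions are made up for illustration.

```python
def hit_at_k(ranked_session_ids, gold_session_ids, k=5):
    """True if any gold session appears among the top-k retrieved sessions."""
    return any(s in gold_session_ids for s in ranked_session_ids[:k])


def recall_at_k(results, k=5):
    """Fraction of questions whose gold session is retrieved in the top k."""
    hits = sum(hit_at_k(ranked, gold, k) for ranked, gold in results)
    return hits / len(results)


# (retrieved sessions best-first, gold sessions) per question - toy data.
results = [
    (["s3", "s9", "s1", "s4", "s7", "s2"], {"s1"}),  # gold at rank 3: hit
    (["s5", "s6", "s8", "s0", "s4", "s2"], {"s2"}),  # gold at rank 6: miss at k=5
    (["s2", "s1"], {"s2"}),                          # gold at rank 1: hit
    (["s7", "s8"], {"s1"}),                          # gold never retrieved: miss
]

print(recall_at_k(results, k=5))   # 0.5
print(recall_at_k(results, k=10))  # 0.75
```

Nothing in this metric checks whether the model then produces the right answer from the retrieved session, which is the step end-to-end QA adds.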

Context rot and lost-in-the-middle research

The reason memory systems exist in the first place is that long contexts degrade attention - and the degradation has architectural roots that 2025-class long-context models have not fully solved. Liu et al. (TACL 2024) ran the canonical experiment and measured a 30%+ accuracy drop on multi-document QA when the answer document moves from position 1 to position 10 in a 20-document context. Performance is highest when relevant information sits at the beginning or end of the input context, forming a U-shaped curve that mirrors the human serial-position effect first documented by Ebbinghaus in 1885.
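One practical consequence of the U-shaped curve is a reordering trick some retrieval pipelines apply before building the prompt: put the strongest documents at the edges and let the weakest sink to the middle. The sketch below is my illustration of that idea, not something taken from the papers cited here; `reorder_for_edges` is a hypothetical helper and assumes the input is already sorted best-first.

```python
def reorder_for_edges(docs_best_first):
    """Place the most relevant docs at the start and end, weakest in the middle."""
    front, back = [], []
    for i, doc in enumerate(docs_best_first):
        # Alternate: 1st best to the front, 2nd best to the back, and so on.
        (front if i % 2 == 0 else back).append(doc)
    return front + back[::-1]


ranked = ["doc1", "doc2", "doc3", "doc4", "doc5"]  # doc1 is the most relevant
print(reorder_for_edges(ranked))
# ['doc1', 'doc3', 'doc5', 'doc4', 'doc2']
```

This does not remove the positional bias; it only tries to keep the documents that matter most out of the low-attention middle.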

The follow-up "Found in the Middle" paper (arXiv:2403.04797) confirmed the U-shape persists even after randomly shuffling document order, indicating the bias is positional, not content-based. Chroma's 2025 study tested 18 frontier models including GPT-4.1, Claude Opus 4 and Gemini 2.5 and found that context rot is reduced but not eliminated even in 2025-class long-context models. The architectural roots are now well understood: RoPE (rotary position embedding) has long-term decay, and softmax-based attention disproportionately allocates attention to initial tokens regardless of relevance (Xiao et al., StreamingLLM, 2023).

The cost angle is what makes this matter for production teams. Even with prompt caching at 90% input-token discount, per-turn cost of long-context inference grows linearly with context length, while memory-system per-turn read cost stays at ~$0.0013 per query (arXiv:2603.04814 "Beyond the Context Window"). For any agent that re-engages with the same context multiple times - which is most production agents - the memory system is structurally cheaper.
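A back-of-the-envelope model shows where the two cost curves cross. The $0.0013 read cost and the 90% caching discount are the figures quoted above; the input-token price is a hypothetical value I picked for illustration, so swap in your provider's actual rate. The model compares read cost only and ignores output tokens and memory write costs.

```python
MEMORY_READ_COST = 0.0013  # USD per query, figure quoted in the text
CACHE_DISCOUNT = 0.90      # 90% input-token discount, quoted in the text
PRICE_PER_MTOK = 3.00      # ASSUMPTION: hypothetical USD per 1M input tokens


def long_context_turn_cost(context_tokens, price_per_mtok=PRICE_PER_MTOK,
                           discount=CACHE_DISCOUNT):
    """Per-turn input cost when the whole context is re-read from cache."""
    return context_tokens / 1_000_000 * price_per_mtok * (1 - discount)


def break_even_tokens(price_per_mtok=PRICE_PER_MTOK, discount=CACHE_DISCOUNT,
                      memory_cost=MEMORY_READ_COST):
    """Context length at which cached long-context cost equals the memory read."""
    return memory_cost / (price_per_mtok * (1 - discount)) * 1_000_000


for tokens in (1_000, 10_000, 100_000, 1_000_000):
    print(f"{tokens:>9} tokens: ${long_context_turn_cost(tokens):.4f} per turn")

print(f"break-even at roughly {break_even_tokens():.0f} tokens")
```

Under the assumed price, the cached long-context turn costs more than the flat memory read once the context passes a few thousand tokens, and the gap widens linearly from there.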

Vector database market

Every AI memory system covered above runs on top of a vector database. The infrastructure layer beneath them is now a $3.2B market on a 27.5% CAGR, projected to hit $8.95B by 2030 per MarketsandMarkets.
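If you want to reuse these market figures, it helps to know how a CAGR relates to its endpoints. The sketch below computes the growth rate implied by two values and a window, and the reverse projection. The window lengths are my assumptions; the text above does not state which base year or forecast period the report uses.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two endpoints."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, cagr, years):
    """Value after compounding start_value at cagr for the given years."""
    return start_value * (1 + cagr) ** years


start, end = 3.2, 8.95  # USD billions, as quoted above

# ASSUMPTION: window length is not stated in the text, so try two readings.
print(f"5-year window: {implied_cagr(start, end, 5):.1%}")
print(f"4-year window: {implied_cagr(start, end, 4):.1%}")
print(f"$3.2B at 27.5% for 5 years: ${project(start, 0.275, 5):.2f}B")
```

A five-year window implies about 22.8% a year and a four-year window about 29.3%, so the quoted 27.5% sits between the two. Reproducing it exactly would need the report's own base figure and forecast window.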

The 2026 leaderboard tightened in March. Pinecone hit 4,000 paying customers with $138M total funding at a $750M valuation. Qdrant closed a $50M Series B in March 2026; the open-source Rust engine consistently uses 2-3x less memory than Go-based competitors and posts P95 latency of 22ms at 10M vectors vs Pinecone's 45ms. Turbopuffer raised $50M Series A and posts the fastest published throughput: 1,100 QPS at 5ms P50 latency, beating Pinecone (850 QPS / 12ms) and Qdrant (920 QPS / 8ms) per Ailog's 2026 comparison.

The cost story matters as much as the performance story. pgvector remains the cheapest option below 50M vectors because it piggybacks on existing PostgreSQL infrastructure - companies including Supabase, Neon and Instacart run pgvector in production. Pinecone reduced rates by 30% in 2025; Forrester analyst Sophie Martin predicts 2-3 major vector database acquisitions by end of 2026 as hyperscalers (AWS, Azure, GCP) strengthen native offerings.

2026 vector database market share and performance

| Vector DB | Market share | P95 latency (10M vectors) | GitHub stars |
|---|---|---|---|
| Pinecone | 28% | 45 ms | Closed-source |
| Qdrant | 18% | 22 ms | 29,000+ |
| Weaviate | 14% | ~50 ms | 14,000+ |
| Milvus | 12% | ~68 ms | 32,000+ |
| Chroma | 8% | N/A | 24,000+ |

Enterprise agent memory adoption

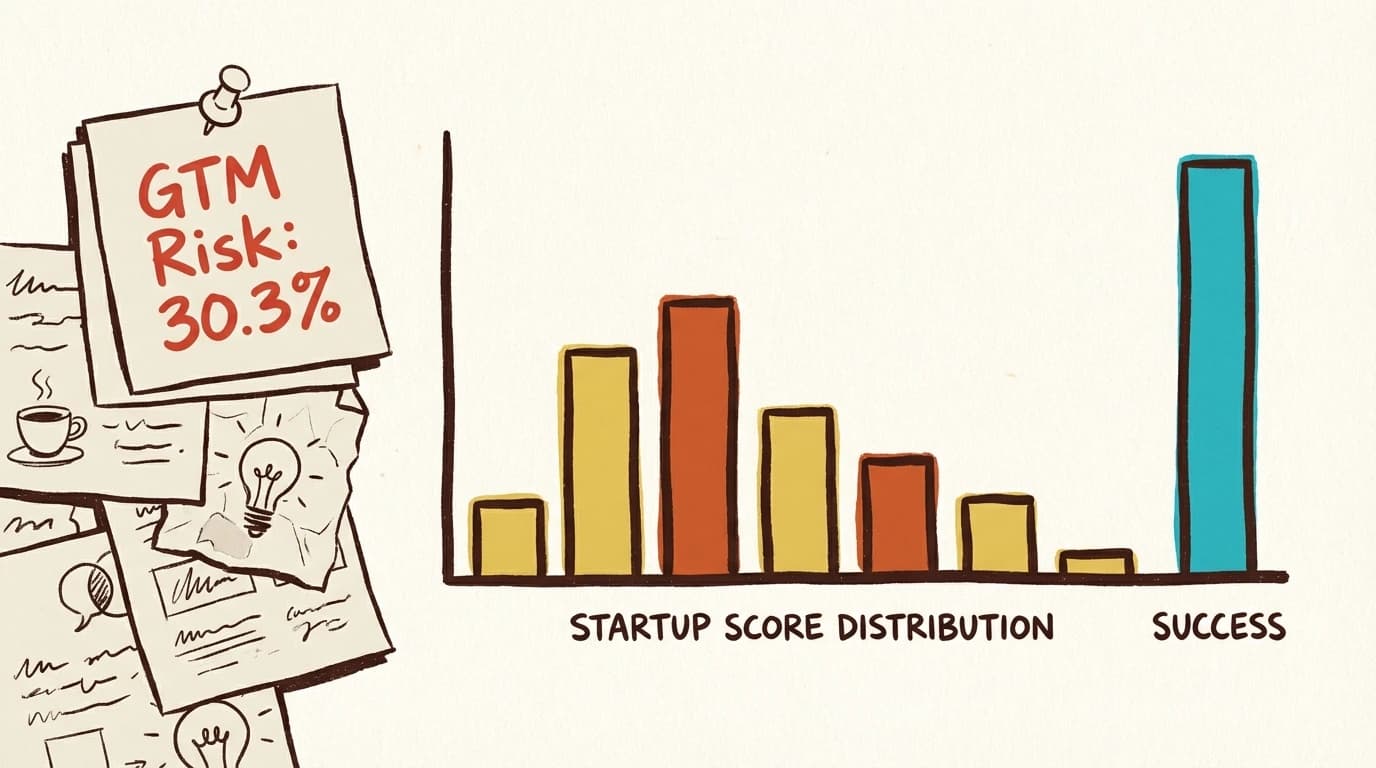

Two 2026 enterprise surveys frame how memory plays out in production. LangChain surveyed 1,300+ professionals and found 57% of organizations have AI agents in production in 2026, with quality (33%) and latency (20%) the top two production blockers - both downstream of memory recall. 94% of organizations with agents in production have observability; 71.5% have full tracing - the prerequisite for debugging memory failures.

Datadog's State of AI Engineering 2026 adds the multi-model angle. Agent framework adoption nearly doubled YoY: 9% of orgs in early 2025 to 18% by early 2026. 70%+ of organizations now run 3 or more LLM models in production, with the share running 6+ models nearly doubling YoY - making cross-model memory portability a critical concern. Datadog reports 8.4 million LLM rate-limit errors in March 2026 alone, accounting for 30% of all LLM call errors and exposing capacity-ceiling fragility for memory-augmented agents.

The McKinsey number that ties this section together: only 6% of organizations qualify as true AI high performers (more than 5% of EBIT attributable to AI). The gap between broad adoption (57% in production) and genuine impact (6% high performers) correlates with whether agents retain learned context across sessions.

Karpathy's LLM Wiki pattern

In April 2026, Andrej Karpathy (co-founder of OpenAI, former AI lead at Tesla, the person who coined "vibe coding") posted "LLM Knowledge Bases" on X and described a workflow shift: instead of using LLMs to generate code, he had been using them to build personal knowledge wikis. The tweet got 16M+ views and his GitHub Gist on the pattern hit 5,000+ stars within days.

Karpathy's framing reorganized how I think about memory. He described three phases of human-AI collaboration: Vibe Coding (Feb 2025), Agentic Engineering (Jan 2026), LLM Knowledge Bases (Apr 2026). The pattern in plain terms: Obsidian is the editor, the LLM does the writing and bookkeeping, the wiki is the artifact. You curate sources and ask questions. The LLM summarizes, cross-references and files.

Karpathy's own LLM-managed wiki on a single research topic grew to ~100 articles and 400,000 words (longer than most PhD dissertations) without him writing any of it directly. Open-source implementations multiplied within weeks: Ar9av/obsidian-wiki and 7xuanlu/origin among the most popular community frameworks.

Where AI memory is heading by 2030

Three forecasts I treat as planning baselines. First, the graph-vs-vector race: GraphRAG-Bench (ICLR 2026) provides the first systematic evaluation of when graph memory beats traditional RAG; LightRAG and GraphRAG outperform NaiveRAG, HyDE and RQRAG on roughly 80% of queries on the largest Legal dataset. Expect graph-native memory frameworks (Letta, Zep, Mem0's graph tier) to take share from pure-vector retrievers in 2027-2028.

Second, pricing pressure: Pinecone reduced rates by 30% in 2025, and new entrants like Turbopuffer push prices down further as commoditization pressures the layer beneath every memory system. Third, the moat thesis: a business that starts using AI memory today builds a compounding business-intelligence advantage over a competitor who starts in three months.

How I would use these statistics

The three numbers I lead with when writing about AI memory in 2026: Mem0's 90% token cost reduction (arXiv:2504.19413), the 30% accuracy gap on LongMemEval (Wu et al., ICLR 2025), and 57% of orgs with agents in production but only 6% AI high performers (LangChain / McKinsey). Those three cover the cost-performance tradeoff, the academic problem statement, and the production reality.

If you cite this page, the canonical attribution is "Preuve AI, AI Memory Systems Statistics 2026." I update the framework section every time a new memory paper drops or a major framework crosses a star threshold. The 60+ stat list above and the source roundup below should give you the spine of any explainer, vendor comparison or trends piece. If you need a quote on memory architecture tradeoffs, multi-LLM stack design, or how a solo founder ships AI-heavy products, email me at vincent@preuve.ai.

FAQ

What is the best AI memory system in 2026?

There is no single winner. Mem0 is the most-adopted production framework (51,000+ GitHub stars, $24M raised) and now scores 93.4% on LongMemEval. Letta (formerly MemGPT) is the leading open-source stateful agent framework with 13,000+ stars and academic credibility. Zep wins on temporal knowledge graphs with up to 18.5% accuracy gain on LongMemEval. MemPalace leads the local-first / zero-API-cost category at 96.6% Recall@5. claude-mem is the leading Claude-Code-native plugin at 46,100 stars. Pick by use case: cross-tool production, agent framework, temporal reasoning, local privacy, or Claude Code IDE.

How much does AI memory reduce token cost vs full-context?

Mem0's published research shows 90% lower token consumption (1.8K tokens per query vs 26K for full-context) and 91% lower p95 latency (1.44s vs 17.12s) on the LOCOMO benchmark. The 2026 token-efficient algorithm averages under 7,000 tokens per retrieval call vs 25,000+ for full-context. Real-world deployments by RevisionDojo and OpenNote report 40% token cost reductions in production.

What is LongMemEval?

LongMemEval is the leading academic benchmark for long-term memory in LLM chat assistants, accepted at ICLR 2025. It contains 500 curated questions testing 5 abilities: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention. Scenarios scale from 115K tokens (LongMemEval-S) to 1.5M tokens (LongMemEval-M). Commercial chat assistants drop ~30% in accuracy on LongMemEval vs oracle conditions.

What is the lost-in-the-middle problem?

The "lost in the middle" phenomenon (Liu et al., TACL 2024) is the U-shaped attention curve in long-context LLMs: performance is highest when relevant information sits at the beginning or end of input context and drops 30%+ when the answer is in the middle. The bias is architectural (RoPE decay, attention sinks) and persists in 2025-class models like GPT-4.1, Claude Opus 4 and Gemini 2.5 even though severity is reduced.

How big is the vector database market?

The vector database market reached $3.2 billion in 2025 and is projected to hit $8.95 billion by 2030 at a 27.5% CAGR (MarketsandMarkets). Pinecone leads with ~28% share, followed by Qdrant (18%), Weaviate (14%), Milvus (12%) and Chroma (8%). Pinecone hit 4,000 paying customers and Qdrant closed a $50M Series B in March 2026.

Does Claude have memory now?

Yes. Anthropic shipped Claude memory to Team and Enterprise plans on September 10, 2025, and to all Pro and Max users on October 23, 2025. Free-tier Claude users still do not have memory as of May 2026. Claude memory is project-scoped (no global persistent profile) and includes incognito chat, import/export, and granular per-memory editing. ChatGPT memory shipped earlier (September 2024 GA, June 2025 to free tier) and Gemini memory has been available since 2024.

Sources used in this report

Every statistic above traces back to a primary publisher.

- Wu et al., LongMemEval (ICLR 2025, arXiv:2410.10813)

- LongMemEval ICLR 2025 poster

- xiaowu0162/LongMemEval GitHub

- Snap Research, LoCoMo (ACL 2024)

- Maharana et al., LoCoMo paper (arXiv:2402.17753)

- Mem0 paper, ECAI 2025 (arXiv:2504.19413)

- Mem0 token-efficient algorithm benchmarks (2026)

- Mem0, State of AI Agent Memory 2026

- mem0ai/mem0 GitHub

- Zep paper (arXiv:2501.13956)

- Zep, State of the Art Agent Memory

- letta-ai/letta GitHub (formerly MemGPT)

- Felicis seed announcement (Letta $10M)

- Letta Code (Terminal-Bench #1 OSS agent)

- MemPalace GitHub

- MemPalace.tech

- danilchenko.dev MemPalace independent review

- Nicholas Rhodes MemPalace benchmark review

- thedotmack/claude-mem GitHub

- Augment Code, claude-mem 46.1K stars

- Model Context Protocol official memory server

- doobidoo/mcp-memory-service GitHub

- rohitg00/agentmemory LongMemEval benchmark

- Liu et al., Lost in the Middle (TACL 2024, arXiv:2307.03172)

- Lost in the Middle (TACL 2024 publication)

- Found in the Middle follow-up (arXiv:2403.04797)

- Morph LLM, Lost in the Middle explained

- Beyond the Context Window (arXiv:2603.04814)

- OpenAI, Memory and new controls for ChatGPT

- OpenAI ChatGPT release notes

- IntuitionLabs ChatGPT plans 2026

- Computerworld, Anthropic Claude memory Team/Enterprise

- CNET, Claude memory Pro/Max rollout

- AI Business, Anthropic memory expansion

- Demand Signals, Claude memory 60% reduction

- The AI Track, Claude Memory Upgrade 2025

- LangChain State of Agent Engineering 2026

- Datadog State of AI Engineering 2026

- DEV Community, State of AI Agent Memory 2026

- MarketsandMarkets Vector DB Report 2025-2030

- swarmsignal.net Vector DB Comparison 2026

- Ailog Vector Database Trends 2026

- Groovy Web Vector DB Comparison 2026

- Andrej Karpathy on X, LLM Knowledge Bases

- Karpathy llm-wiki Gist

- MindStudio, Karpathy LLM Wiki explained

- Ar9av/obsidian-wiki community framework

- GraphRAG-Bench (ICLR 2026, arXiv:2506.02404)

- LightRAG paper (arXiv:2410.05779)

- Microsoft Research GraphRAG

A full audit trail with the exact URL behind every statistic on this page is published at /blog/_research/ai-memory-systems-statistics-2026.json. Every claim is traceable to a primary publisher.
