TL;DR

There are 3 types of startup idea validators: chatbots (fast, unsourced), scorecards (structured, often black-box), and sourced data tools (slower, verifiable). The 4 non-negotiables: clickable sources, live data, actionable output within 48 hours, and willingness to say "don't build." I built Preuve AI (formerly Test Your Idea) as a sourced data validator. 4,000+ ideas tested. 90% scored below 70.

A startup idea validator is a tool that evaluates a business concept's market viability by analyzing competition, demand signals, and risk factors, then returns a structured assessment to help founders decide whether to build, pivot, or move on.

There are at least a dozen startup idea validators now. Most launched in the last 18 months. Most do the same thing: you type an idea, you get a confident answer.

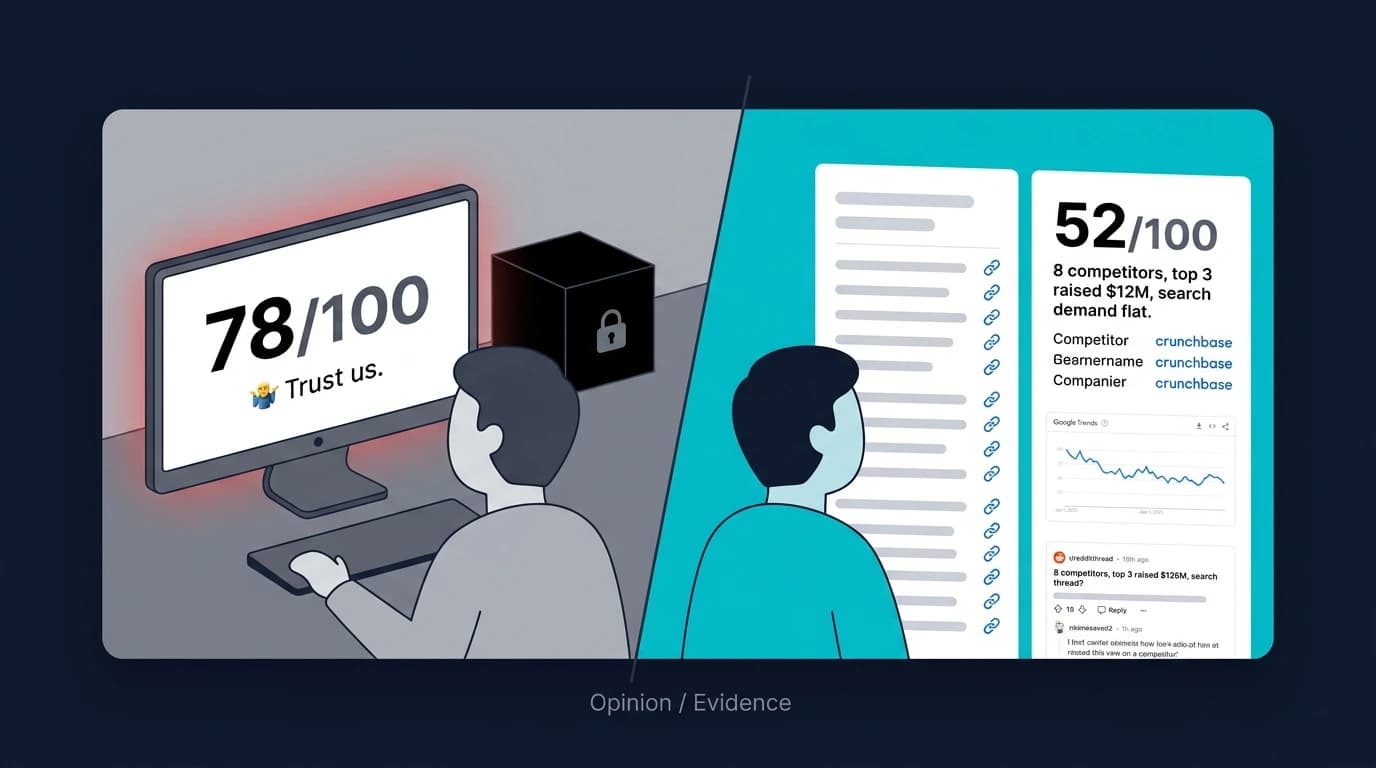

The problem isn't confidence. It's whether the confidence is connected to anything real.

This post breaks down the categories of tools, what separates the useful ones from the dangerous ones, and how to pick the right one for how you actually work. I built one of these tools (Preuve AI), so I have a bias. I'll be upfront about where it fits and where something else might be better.

What should a startup idea validator actually do?

Before comparing tools, it helps to define what "good" looks like.

A useful validator does three things:

Surfaces demand and competition signals fast. Are people searching for this? Who's already building it? What do they charge? These are the signals that matter before you spend a weekend coding. A good tool finds them in minutes instead of hours.

Shows where the data comes from. If the tool says "your market is $1.5B," can you click through and see the source? If it says "your main competitor raised $3M," can you verify that on Crunchbase? Sourced data is the difference between research and guessing. If you can't check the numbers, you can't trust the numbers.

Highlights risks you can test immediately. Not generic warnings like "you may face competition." Specific risks: "this market has 12 funded competitors, the top 3 raised over $10M combined, and your proposed price is 40% below the median." That's a risk you can evaluate in 5 minutes. "Consider market dynamics" is a risk you can't do anything with.
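To make that pricing risk concrete, here is a minimal Python sketch of the "40% below the median" check. The competitor prices are invented for illustration, and `price_gap_vs_median` is a hypothetical helper, not part of any tool mentioned in this post.

```python
from statistics import median


def price_gap_vs_median(proposed_price: float, competitor_prices: list[float]) -> float:
    """Return the gap between the proposed price and the competitor median, in percent.

    A negative result means the proposed price sits below the median.
    """
    mid = median(competitor_prices)
    return (proposed_price - mid) / mid * 100


# Hypothetical competitor prices in dollars (made up for this example).
competitor_prices = [39.0, 49.0, 50.0, 59.0, 79.0]

gap = price_gap_vs_median(30.0, competitor_prices)
direction = "below" if gap < 0 else "above"
print(f"Proposed price is {abs(gap):.0f}% {direction} the median")
# prints "Proposed price is 40% below the median"
```

The point is not the code. A specific risk reduces to a number you can recompute yourself in a few minutes, and a generic warning does not.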

A good validator should NOT:

Convince you to build. If a tool says yes to every idea, it's a marketing funnel pretending to be a research tool. 43% of startups fail because of poor product-market fit (CB Insights, updated framing). 4,000+ ideas have been tested on Preuve AI. 90% scored below 70. The most common score bracket is 41-50. Any tool that mostly says "go for it" is optimized for your feelings, not your outcome.

Hide sources. A score without sources is an opinion. You can get opinions for free from friends, Twitter, and ChatGPT. What you can't get for free is sourced evidence organized into a decision framework.

Pretend it replaces customer conversations. No tool can tell you whether real people will pay. Tools find signals. Conversations confirm them. Any validator that positions itself as "all the validation you need" is overpromising.

What are the 3 types of idea validators?

Not all validators work the same way. Understanding the category helps you pick the right tool for the right moment.

Type 1: Chatbot validators

How they work: You describe your idea. An LLM generates analysis, SWOT charts, market commentary, and suggestions. Some add a conversational layer so it feels like talking to an advisor.

Examples: ValidatorAI, VenturusAI (Vera), or just ChatGPT/Claude with a validation prompt.

When they're useful: Brainstorming. Stress-testing your thinking. Getting a structured framework (SWOT, Porter's Five Forces) when you don't know where to start. Fast, low-friction, usually free or cheap.

When they're dangerous: When you treat the output as research. LLMs hallucinate competitor names, fabricate market sizes, and generate pricing data that doesn't exist. A peer-reviewed study in Nature's Scientific Reports found that ChatGPT fabricated 46.4% of scholarly citations it generated. LLMs deliver these errors confidently, which is worse than being wrong and uncertain, because a confident wrong answer stops you from doing real research.

The test: After the tool gives you a competitor name, Google it. If the company doesn't exist, the tool is generating fiction. This happens more often than you'd expect.

Type 2: Scorecard validators

How they work: You enter your idea (sometimes with additional parameters like target market, budget, or stage). The tool runs it through a scoring model and returns a structured report with a numerical score.

Examples: DimeADozen, Bizway, some features of FounderPal.

When they're useful: When the scoring model is transparent and the report explains why you got that score. A score of 55 that says "differentiation risk is high because 8 competitors offer the same feature set at lower prices" is actionable. You know what to fix.

When they're dangerous: When the score is a black box. If you can't see how the number was calculated or what data fed into it, the score is decorative. A confident "72/100" with no explanation is worse than no score at all, because it creates false certainty.

The test: Does the tool show you the inputs and logic behind the score? Can you click through to verify any claim? If the answer to both is no, the score is an AI opinion with a number attached.

Type 3: Sourced data validators

How they work: The tool pulls from live external data sources (search trends, competitor databases, community forums, review platforms) and assembles findings with references. The output includes links you can click to verify each claim.

Examples: Preuve AI.

When they're useful: When you need to make a real decision. Choosing between two ideas. Deciding whether to spend 3 months building. Preparing data for a co-founder or investor conversation. Any situation where "I checked, and here's what the data says" matters more than "an AI thinks this might work."

When they're less useful: When you're in pure brainstorming mode and don't need verifiable evidence yet. When speed matters more than accuracy (a chatbot gives you something in 10 seconds; a sourced scan takes 60 seconds). When your idea is so early that there's no market data to find.

The test: Pick any claim in the report. Click the source link. Does it exist? Does it support the claim? If yes, the tool is doing research. If links are broken or missing, it's doing the same thing as a chatbot with better formatting.
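If a report has dozens of claims, you can automate the first half of that test. Below is a rough Python sketch that flags claims with missing, malformed, or dead source links. The `Claim` structure and the `audit_claims` function are assumptions for illustration and do not reflect how any validator exports its reports. A link that resolves only proves the page exists. You still have to read it to see whether it supports the claim.

```python
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional
from urllib.parse import urlparse


@dataclass
class Claim:
    text: str
    source_url: Optional[str]  # None when the report gives no link


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status code for url, or None if the request fails outright."""
    request = urllib.request.Request(url, headers={"User-Agent": "source-check/0.1"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # the server answered, but with an error status
    except (OSError, ValueError):
        return None  # DNS failure, timeout, refused connection, bad URL


def audit_claims(
    claims: list[Claim],
    fetch: Callable[[str], Optional[int]] = http_status,
) -> dict[str, str]:
    """Label each claim 'ok', 'missing', 'malformed', or 'broken'."""
    results: dict[str, str] = {}
    for claim in claims:
        if not claim.source_url:
            results[claim.text] = "missing"
            continue
        parsed = urlparse(claim.source_url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            results[claim.text] = "malformed"
            continue
        status = fetch(claim.source_url)
        ok = status is not None and 200 <= status < 400
        results[claim.text] = "ok" if ok else "broken"
    return results


# Offline demo with a stub fetcher; drop the fetch argument to hit real URLs.
def stub_fetch(url: str) -> Optional[int]:
    return 200 if "live" in url else 404


demo = [
    Claim("Top competitor raised $3M", "https://live.example/funding"),
    Claim("Market is $1.5B", None),
    Claim("Median price is $39", "https://gone.example/pricing"),
]
for text, verdict in audit_claims(demo, fetch=stub_fetch).items():
    print(f"{verdict:9} {text}")
```

Passing the fetcher in as an argument keeps the demo runnable without network access and makes it easy to swap in a different HTTP client.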

What to look for in any validator (4 non-negotiables)

Regardless of which type you choose, check for these:

1. Clickable sources for every key claim

Market sizes, competitor names, funding amounts, pricing data, demand trends. Each of these should have a link. Not a footnote that says "source: market research." An actual URL you can open in a new tab.

Why this matters: LLMs are trained on internet data but don't cite it. They remix and recombine information in ways that lose the source. Purpose-built tools that pull from live databases (Crunchbase, Google Trends, G2, Product Hunt) can show receipts. That's the difference.

2. Recency

A market size figure from 2020 is useless in 2026. A competitor that shut down 18 months ago is irrelevant. Search trends from before COVID tell you nothing about today.

Check: does the tool pull live data, or is it working from a static training dataset? Google Trends, Crunchbase, and review platforms update continuously. An LLM's knowledge is frozen at its training cutoff.

3. Actionability within 48 hours

The output should tell you what to do this week. Not "consider your competitive positioning." That's a consulting euphemism for "we have nothing specific to say."

Good actionability looks like: "Your top competitor charges $39/report and their weakness is static PDFs with no live data. Test positioning around real-time sourced analysis at $29." You can act on that sentence today.

Bad actionability looks like: "Consider differentiating through unique value propositions." You cannot act on that sentence ever.

4. Willingness to say "don't build"

This is the one most people skip when evaluating tools. Run a clearly bad idea through the validator. Something with an obvious fatal flaw: a saturated market, declining demand, or a regulatory wall.

Does the tool catch it? Does it give a low score and explain why? Or does it find a way to be encouraging about everything?

A tool that says "great potential" to every idea is optimized for retention, not accuracy. You want the tool that occasionally ruins your day with a 34/100 and a clear explanation of why.
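One way to run this test is to feed the validator a handful of deliberately bad ideas and look at the score distribution. The sketch below is a hypothetical Python helper, assuming scores on a 1 to 100 scale and using 70 as the pass mark only because that is the cutoff I quote elsewhere in this post. The sample scores are made up.

```python
from collections import Counter


def bracket(score: int) -> str:
    """Map a 1-100 score to its 10-point bracket label, for example '41-50'."""
    low = (score - 1) // 10 * 10 + 1
    return f"{low}-{low + 9}"


def summarize(scores: list[int], pass_mark: int = 70) -> tuple[float, str]:
    """Return (share of scores below pass_mark, most common 10-point bracket)."""
    share_below = sum(score < pass_mark for score in scores) / len(scores)
    most_common_bracket = Counter(bracket(s) for s in scores).most_common(1)[0][0]
    return share_below, most_common_bracket


# Hypothetical scores returned for five ideas with an obvious fatal flaw.
bad_idea_scores = [34, 45, 48, 72, 41]

share, mode = summarize(bad_idea_scores)
print(f"{share:.0%} scored below 70; most common bracket is {mode}")
# prints "80% scored below 70; most common bracket is 41-50"

if share == 0:
    print("The tool never said 'don't build'. Treat its scores as marketing.")
```

If the share below the pass mark is zero for ideas you picked because they are bad, the tool fails the fourth non-negotiable.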

How do the major validators compare?

Here's how the major validators stack up on what matters:

| Tool | Type | Sources | Live Data | Best For |
|---|---|---|---|---|
| ValidatorAI | Chatbot | No | No | Brainstorming, not decisions |
| DimeADozen | Scorecard | No | No | Shareable PDF reports |
| VenturusAI | Chatbot | No | No | MBA-style frameworks |
| FounderPal | Scorecard | No | No | Quick first pass |
| Preuve AI | Sourced data | Yes (40+) | Yes | Data-backed decisions |

ValidatorAI: Chatbot approach. Conversational, encouraging, generates landing pages during the session. Large community (200,000+ users). Con: reputation for saying yes to everything. No source links. Best for brainstorming, not decisions.

DimeADozen: Scorecard approach. Produces long PDF reports (40+ pages). Polished formatting. Con: AI-generated content without verifiable sources. Reports are static. Best for founders who want a document to share.

VenturusAI: Chatbot with business frameworks (SWOT, PESTEL, Porter's). AI consultant "Vera" answers follow-ups. Con: frameworks feel academic. No live data sources. Best for MBA-style structured thinking.

FounderPal: Lightweight and fast. Good for a zero-friction first pass when you don't want to commit to anything. Con: less depth on competitors and market sizing. Best as a starting point.

IdeaBuddy: Planning-first, not validation-first. Better if you need a canvas, financial plan, business plan, and a guided workspace. Con: the public positioning is around planning structure, not source-linked market evidence. I wrote a full Preuve AI vs IdeaBuddy comparison if that is the tradeoff you are actually evaluating.

Preuve AI: I built this as a sourced data validator. It scans 40+ live sources (Crunchbase, Google Trends, Reddit, G2, Product Hunt, Capterra, Hacker News). Every key claim links to a source. 4,000+ ideas tested, 90% scored below 70. Con: pay-per-report model ($29), no unlimited plan. Best for decisions where you need verifiable evidence.

For a deeper breakdown of 8 tools with pros, cons, and pricing, I wrote a full comparison of the best startup validation tools for 2026.

If you're looking to switch away from a specific tool rather than research the whole category, start with the dedicated pages for IdeaProof alternatives, VenturusAI alternatives, the best validation tools 2026 roundup, or the full compare hub.

When is Preuve AI the right choice?

I'm the founder, so I'll be direct about where it fits and where it doesn't.

Pick Preuve AI when:

You're choosing between 2-3 ideas and need a tiebreaker grounded in data, not gut feel. The scored comparison across market signals, competition, and risk gives you a decision framework.

You need numbers you can defend. If you're sharing results with a co-founder, advisor, or investor, sourced data matters. "The report found 8 competitors, the top 3 raised a combined $12M, and search demand is growing 40% YoY, here are the links" is a different conversation than "ChatGPT said the market looks good."

You want honest assessment. I published my analysis of 1,000+ startup ideas and the score distribution is public: 90% below 70, mode in the 41-50 range. If your idea scores 34, the report tells you why and suggests specific pivots.

Don't pick Preuve AI when:

You're in pure brainstorming mode and need to riff on 50 variations quickly. A chatbot is better for that. The per-report model is designed for ideas you're seriously considering, not for rapid ideation.

Your idea is so novel that there's no existing market data. If no one has searched for your keywords, no competitor exists in any database, and no community has discussed the problem, there's nothing for the tool to find. Skip straight to customer conversations.

You want a business plan or pitch deck. The report is a validation document, not a plan. It tells you what's wrong and how to fix it. Building the plan is your job. (Though if you need one, I offer an Investor Package with pitch deck, financial model, and investment memo.)

The real validation stack

Even the best tool is one input. Here's the full process:

Step 1: Sourced scan. Run your idea through a tool that pulls live data. Get the competitive landscape, demand signals, and risk profile. This takes 60 seconds and catches the red flags that save you days. If the scan shows a dead market or 15 well-funded competitors, you skip to your next idea without burning a week.

Step 2: 10 buyer conversations. Not users. Not friends. People who match your target customer and have the budget to pay. Ask how they solve the problem today, what's annoying about their current approach, and what they've paid for in the past. The tool tells you what the market looks like. Conversations tell you what buyers actually want.

Step 3: Pre-sell. Before you build, sell. A paid pilot, a concierge version, a paid audit. If at least 2 out of 10 conversations turn into money, build. If 0 do, change the offer or kill the idea.

I wrote a full walkthrough of this process in how to validate a startup idea in 7 days.

For agencies running this process on client ideas at scale, see how to pre-qualify startup leads before a discovery call.

FAQ

What's the best free startup idea validator?

For a free first pass, FounderPal is fast and frictionless. Preuve AI's free tier gives you a viability score, top blockers, and a competitor preview sourced from 40+ live databases. The free tier on most chatbot tools (ValidatorAI, VenturusAI) is useful for brainstorming but not for verifiable market data.

Can I just use ChatGPT to validate my startup idea?

You can, but verify everything it outputs. ChatGPT will confidently name competitors that don't exist and cite market sizes with no source. If you use it, treat every claim as a hypothesis and Google each one individually. At that point, a purpose-built tool saves you the verification step. I wrote a detailed breakdown of Preuve AI vs ChatGPT if you want specifics.

How do I know if a validation score is trustworthy?

Check two things: can you see what data went into the score, and can you click through to verify the claims? If the tool shows a score of 72 with no explanation or sources, it's an AI opinion. If it shows 72 because search demand is growing 30%, 6 competitors exist with specific weaknesses, and community threads show active pain, that's a score you can trust.

How many ideas should I validate before committing?

Most successful founders test 2-5 ideas before finding the one that sticks. I offer 5-packs ($89, or $17.80 per report) and 10-packs ($149, or $14.90 per report) for this reason. Don't commit to your first idea. Commit to finding the right one.
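For anyone who wants to check the pack math, here is a small Python sketch. The pack prices are the ones quoted above and the $29 single-report price is the one listed in the comparison section. The function names are just illustrative.

```python
SINGLE_REPORT_PRICE = 29.00  # single-report price quoted in the comparison section

# Pack size -> total pack price in dollars, as quoted in the answer above.
PACKS = {5: 89.00, 10: 149.00}


def per_report(pack_price: float, pack_size: int) -> float:
    """Price per report within a pack, rounded to cents."""
    return round(pack_price / pack_size, 2)


def saving_vs_single(
    pack_price: float,
    pack_size: int,
    single_price: float = SINGLE_REPORT_PRICE,
) -> float:
    """Fraction saved per report compared with buying reports one at a time."""
    return 1 - (pack_price / pack_size) / single_price


for size, price in PACKS.items():
    print(
        f"{size}-pack: ${per_report(price, size):.2f} per report, "
        f"{saving_vs_single(price, size):.0%} less than ${SINGLE_REPORT_PRICE:.2f} each"
    )
```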

Is a validator enough or do I still need to talk to customers?

You still need to talk to customers. Always. A validator finds market signals and competitive data at scale. Customer conversations find buying intent, emotional triggers, and willingness to pay. The validator makes your conversations better by giving you real data to reference. It doesn't replace the conversation.

What about idea validators for agencies?

If you're qualifying startup leads at scale (not validating your own idea), look for white-label options with a dashboard. I built an agency widget that embeds on your site, scores every inbound lead against 40+ data sources, and gives you a scored pipeline before discovery calls. The prospect gets a free market analysis. You get a pre-qualified lead with sourced evidence. Pricing starts at $149/month for 15 leads.

Want to see which type of validator fits your idea?

The free scan takes 60 seconds. You get a viability score, top blockers, and competitor preview with sources.
