TL;DR

AI business context validation is the process of using AI agents to pull live market data, competitor evidence, and demand signals into a structured assessment of a business idea. It is different from asking ChatGPT: the data is live, sourced, and cross-validated across models. I built Preuve AI as exactly this kind of system. Here is what it actually checks, the 90-second test to try it, and where it still falls short.

Ask ChatGPT to rate your startup idea. It says the idea is "promising" and suggests some light refinements. The score is 7 or 8 out of 10. It always is.

Try it again with something absurd - "an app for dogs to rate their owners' cooking." Same output: promising, interesting angle, here are three features to consider.

Generic AI feedback on a startup idea is useless. The model has no context; it predicts what sounds encouraging. That is not validation. It is a congratulations machine.

AI business context validation is something else entirely. It is a retrieval problem, not a reasoning problem. The AI does not rate the idea from memory - it queries the world, in real time, for evidence that the idea has demand, real competition, and a reachable market. Then it tells you what that evidence actually says.

I've built a production system that does exactly this. 5,000+ ideas have run through it. Here is how it works, what it actually checks, and why most "AI validation" products do not do this at all.

The Preuve AI Score

The Preuve AI Score is a 0-to-100 startup viability rating computed by 10 AI agents that cross-validate claims against 50+ live data sources, covering competitors, demand signals, market size, community sentiment, hiring trends, and patent activity. Every point is source-linked. A score above 70 indicates launch-ready signals. A score below 40 means fundamental validation work remains.
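As a rough illustration of how those two thresholds read in practice, here is a minimal sketch. The function name `interpret_score` is hypothetical, and the text does not say what a score between 40 and 70 means, so the middle label is a placeholder and not part of the actual scoring.

```python
def interpret_score(score: int) -> str:
    """Map a 0-100 score to the two bands named in the text."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score > 70:
        return "launch-ready signals"
    if score < 40:
        return "fundamental validation work remains"
    # Assumption: the 40-70 band is not described in the text.
    return "band not defined in the text"


for example in (85, 55, 20):
    print(example, "->", interpret_score(example))
```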

What is AI business context validation?

AI business context validation is the process of using AI agents to pull live market data, competitor evidence, community sentiment, and demand signals into a structured assessment of a business idea's viability.

The distinguishing word is context. The AI is not rating the idea from its training data. It is retrieving current context from live sources and validating your assumptions against that evidence.

Three parts matter, and missing any one of them breaks the whole thing:

- Retrieval from live sources. Not the model's training set - the internet, today, filtered for signal.

- Multi-agent parallelization. Different agents for different evidence categories: demand, competition, market sizing, sentiment.

- Cross-model validation. The same question put to multiple models and then reconciled. This catches confident-sounding noise before it reaches the user.

Miss any of the three and you end up with something that looks like business context validation but behaves like a chatbot in a suit.
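To make the third part concrete, here is a minimal sketch of cross-model reconciliation using simple majority agreement. This is an assumption for illustration: the text does not say how the answers are reconciled, and `Model`, `reconcile`, and `min_agree` are hypothetical names. The stub models stand in for real model clients.

```python
from collections import Counter
from typing import Callable, Optional

# Assumption: a "model" is any callable that maps a question to a short answer.
Model = Callable[[str], str]


def reconcile(question: str, models: list[Model], min_agree: int = 2) -> Optional[str]:
    """Return the answer shared by at least `min_agree` models, else None."""
    if not models:
        raise ValueError("at least one model is required")
    # Normalize so trivial differences in case or spacing do not split the vote.
    answers = [model(question).strip().lower() for model in models]
    answer, votes = Counter(answers).most_common(1)[0]
    return answer if votes >= min_agree else None


# Stub models for illustration only; two agree, one dissents.
stub_models = [lambda q: "Yes", lambda q: "yes ", lambda q: "no"]
agreed = reconcile("Is there a funded competitor?", stub_models)
print(agreed)
```

A `None` result is the useful case here: it marks a claim the models disagree on, which is a candidate for the low-signal flag and should not be reported as fact.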

Why generic AI feedback on a startup idea is useless

A recent example: a founder submitted a niche B2B SaaS idea. Two competitors had shipped similar products in the previous six months. ChatGPT's rating: 8/10, "strong opportunity, large market."

Those competitors weren't in ChatGPT's training data. The cutoff was earlier. The model had no way to know, so it praised the idea straight into a wall.

Three reasons generic AI feedback fails at validation:

- Knowledge cutoff. New competitors, new regulations, new market entrants, and new trends all sit outside the model's training data. Your market changes weekly. The model does not.

- No source trail. A number without a URL is a guess. Generic AI gives numbers. It does not cite them. You cannot check.

- Sycophancy bias. Models are trained to be helpful. Helpful looks like encouraging. Encouraging is not validation.

I wrote more on why this breaks at scale in "How 10 AI agents validate a startup idea." The short version: you cannot get validation from a system that has no live access to the thing you are validating.

What AI agents actually check when they validate business context

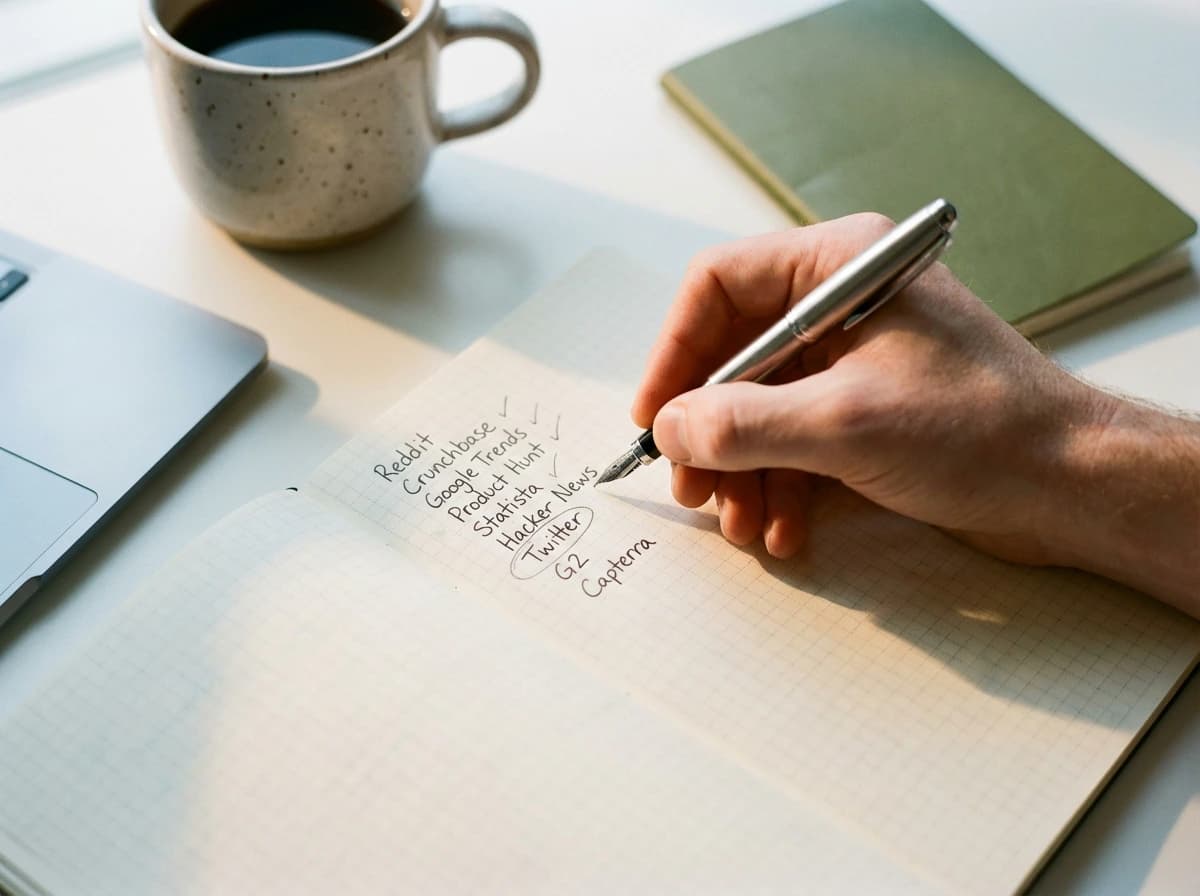

Inside Preuve AI, 10 agents run in parallel. Each owns a dimension. Combined, they query 50+ live sources. Here is what they pull.

| Dimension | Sources queried | What gets validated |
|---|---|---|
| Competitors | Crunchbase, G2, Capterra, BuiltWith, AngelList | Who ships, traction, funding, product-market fit signals |
| Demand signals | Reddit, Product Hunt, Google Trends, YouTube comments | Are real people describing this pain in their own words |
| Market sizing | Statista, government data, industry reports | TAM, SAM, SOM with sources, not vibes |
| Community sentiment | Twitter/X, Hacker News, niche subreddits, Discord | What the conversation looks like before you arrive |
| Leading indicators | Hiring posts, patent filings, news, press releases | Where the market is heading, not where it has been |

Every number in the final report links back to a source URL. That was the first design decision I made and the one I am most stubborn about: if you cannot audit a claim, it is not validation, it is just confident prose.
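The shape of that design can be sketched in a few lines: agents run concurrently, each returns claims, and any claim without a source URL is dropped before it reaches the report. This is a minimal sketch under stated assumptions, not the production pipeline. `Claim`, `run_agent`, and `gather_evidence` are hypothetical names, the agent body is a placeholder where live queries would go, and the five dimensions mirror the table rows (the text does not say how the 10 agents map onto them).

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    dimension: str
    statement: str
    source_url: str  # every claim must carry a URL to be kept


async def run_agent(dimension: str) -> list[Claim]:
    """Hypothetical agent; a real one would query live sources here."""
    await asyncio.sleep(0)  # stands in for network I/O
    return [
        Claim(
            dimension,
            f"placeholder finding for {dimension}",
            f"https://example.com/{dimension}",
        )
    ]


async def gather_evidence(dimensions: list[str]) -> list[Claim]:
    # Run all agents concurrently, not one after another.
    batches = await asyncio.gather(*(run_agent(d) for d in dimensions))
    claims = [claim for batch in batches for claim in batch]
    # Audit rule: discard any claim that lacks an http(s) source URL.
    return [c for c in claims if c.source_url.startswith(("http://", "https://"))]


DIMENSIONS = ["competitors", "demand", "market_sizing", "sentiment", "leading_indicators"]
claims = asyncio.run(gather_evidence(DIMENSIONS))
for claim in claims:
    print(claim.dimension, claim.source_url)
```

Making `source_url` a required field is the point of the sketch: an unsourced claim cannot be constructed by accident, and the filter catches empty or malformed values.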

How is AI business context validation different from a chatbot?

The gap is architectural. A chatbot is one model reading your prompt and returning text. AI business context validation is a pipeline of agents retrieving live evidence, reasoning over it, and returning structured output with sources attached.

| Property | Generic AI chatbot | AI business context validation |
|---|---|---|
| Data freshness | Training cutoff, months or years old | Live, today |
| Source transparency | None | Every claim linked to a URL |
| Architecture | One model, one call | 10+ agents in parallel, cross-validated |
| Bias default | Sycophancy (encouragement) | Evidence-weighted (discouragement if warranted) |
| Output shape | Prose, opinion | Structured report, sections, scores |

Gartner estimates that of the 2,000+ companies claiming to build agentic AI, only about 130 are genuine. The rest are chatbots with relabeled marketing. Most "AI business validator" products fit the relabeled category. For the fuller breakdown, read "Agent-washing: only ~130 of 2,000 companies are genuine."

The 90-second AI business context validation test

You do not need to read another essay to check whether an AI validator is doing real context retrieval. Two questions cut through the noise.

- Ask it about a competitor that launched this week. Pick a company that shipped publicly in the last 7 days. If the validator does not mention it, or makes up a different company, it is not pulling live data. It is reading training data.

- Ask it to cite one claim with a source URL. Any claim. If it cannot produce a real URL that loads and supports the claim, the numbers are generated, not retrieved. Nothing it says can be trusted.

I ran this test on five widely advertised "AI business validators" recently. Three failed the first question: they had no knowledge of a product my own company had shipped that same year. Four failed the second. The one that passed both is the one worth using. The post "Startup validation benchmarks from 4,000+ ideas" has a longer version of this test.
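The second question can be partly automated. The sketch below pulls URLs out of a validator's answer, checks that each one loads, and looks for a keyword from the claim on the page. All names (`extract_urls`, `audit_citation`, `stub_fetch`) are hypothetical, and the keyword match is only a crude proxy for "supports the claim", so a human should still read the source. The fetcher is injected so the example runs without network access; a real check would pass a function that downloads the page.

```python
import re
from typing import Callable

URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def extract_urls(answer: str) -> list[str]:
    """Pull http(s) URLs out of a validator's free-text answer."""
    return [url.rstrip(".,;") for url in URL_PATTERN.findall(answer)]


def audit_citation(answer: str, keyword: str, fetch: Callable[[str], str]) -> bool:
    """True if at least one cited URL loads and its page mentions `keyword`.

    `fetch` returns the page text, or raises if the URL does not load.
    """
    for url in extract_urls(answer):
        try:
            page = fetch(url)
        except Exception:
            continue  # a URL that does not load cannot support the claim
        if keyword.lower() in page.lower():
            return True
    return False


# Stub data for illustration only; not a real page or a real company.
PAGES = {"https://example.com/report": "Placeholder Co announced a seed round."}


def stub_fetch(url: str) -> str:
    return PAGES[url]  # KeyError stands in for a URL that fails to load


answer = "Placeholder Co is funded (https://example.com/report)."
passed = audit_citation(answer, "seed round", stub_fetch)
print(passed)
```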

Where AI business context validation falls short

I know what is wrong with my own product. These are the real limits, not the sanitized ones.

- New-category ideas. If nobody is talking about your thing yet (rare, but it happens in deep science and frontier research), demand signals will be thin. The report flags low-signal results. The founder still has to decide.

- Regulated or local markets. Healthcare regulation in Vermont, tax law in Portugal, a local zoning issue in Austin. Public web data is weaker here than in global SaaS. I mark these reports as directional, not definitive.

- Confident noise. Some sources lie confidently. Cross-validating across three models reduces this but does not eliminate it. Audit the source URLs before making a bet.

- Taste. Whether you want to spend three years on the problem is not a data question. The validator can tell you the market is there. It cannot tell you to care.

Use AI business context validation to kill bad assumptions before you spend a quarter building something that will not find buyers. Then go talk to 10 real prospective customers. My "5-step framework to validate without building" walks through that sequence in order.

Frequently Asked Questions

What is AI business context validation?

AI business context validation is the process of using AI agents to pull live market data, competitor evidence, community sentiment, and demand signals into a structured assessment of a business idea's viability. The distinguishing word is "context." The AI is not rating the idea from its training data. It is retrieving current context from live sources and validating your assumptions against that evidence.

How is AI business context validation different from asking ChatGPT?

ChatGPT rates ideas from its training data, which has a knowledge cutoff and no citation trail. It tends to rubber-stamp most ideas because safe encouragement is the default output. AI business context validation retrieves live data in real time from 50+ sources (Reddit, Product Hunt, G2, Crunchbase, Google Trends, hiring data, patents, news), cross-validates claims across models, and links every number back to a source URL.

What sources does AI business context validation pull from?

At Preuve AI the system queries 50+ live sources organized into categories: competitor data (Crunchbase, G2, Capterra, BuiltWith), demand signals (Reddit, Product Hunt, Google Trends, YouTube comments), market sizing (Statista, government data, industry reports), community sentiment (Twitter/X, Hacker News, subreddits), and hiring/patent data as leading indicators. Ten specialized AI agents query these in parallel and cross-validate the results.

How long does an AI business context validation take?

A full Preuve AI scan takes 6-8 minutes of compute. The agents query their sources in parallel, not sequentially. For a founder, the wait is under 10 minutes; the alternative (manual research) is 10-20 hours and usually skipped.

When does AI business context validation fail?

Three failure modes. First, ideas so new that no community is discussing them yet produce thin demand signals (rare, but possible with deep-science plays). Second, regulated or local markets where primary data is not on the public web will underperform. Third, any AI system can be fooled by confidently-phrased noise; cross-validation across models reduces this but does not eliminate it. Treat the output as evidence, not a verdict.

Is AI business context validation enough to decide whether to build?

No. It is the fastest way to kill bad assumptions before you spend three months building. Idea validation from live data is step one. Talking to 10 prospective customers, putting up a landing page, and measuring intent are steps two through four. AI validates context. You still validate demand with human conversations.
